Exploring Feature Representation Learning for Semi-supervised Medical Image Segmentation

Nov 22, 2021

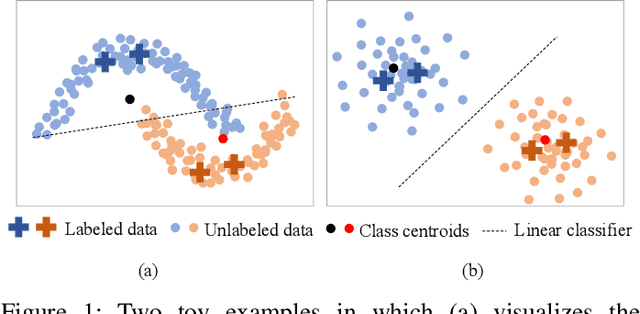

This paper presents a simple yet effective two-stage framework for semi-supervised medical image segmentation. Our key insight is to explore feature representation learning with labeled and unlabeled (i.e., pseudo-labeled) images to enhance segmentation performance. In the first stage, we present an aleatoric uncertainty-aware method, namely AUA, which improves segmentation performance so that high-quality pseudo labels can be generated. Considering the inherent ambiguity of medical images, AUA adaptively regularizes consistency on images with low ambiguity. To enhance representation learning, we propose a stage-adaptive contrastive learning method comprising a boundary-aware contrastive loss, which regularizes the labeled images in the first stage, and a prototype-aware contrastive loss, which optimizes both labeled and pseudo-labeled images in the second stage. The boundary-aware contrastive loss optimizes only the pixels around segmentation boundaries, reducing computational cost. The prototype-aware contrastive loss fully leverages both labeled and pseudo-labeled images by building a centroid for each class, so that pixels are compared with a small set of class centroids rather than with every other pixel, which reduces the cost of pair-wise comparison. Our method achieves the best results on two public medical image segmentation benchmarks. Notably, it outperforms the prior state of the art by 5.7% in Dice on colon tumor segmentation with only 5% of the images labeled.
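The abstract does not say how AUA measures ambiguity or how it scales the consistency term. As a minimal sketch of the general idea only (uncertainty-weighted consistency), assume that per-image ambiguity is approximated by the mean per-pixel binary entropy of a foreground probability map, and that the consistency term between two predictions (for example from two perturbed views) is an MSE scaled by `exp(-ambiguity)`. The helpers `binary_entropy`, `image_ambiguity`, and `weighted_consistency` are hypothetical names, not the paper's code.

```python
import math
from typing import List, Sequence


def binary_entropy(p: float, eps: float = 1e-7) -> float:
    """Entropy (in nats) of a Bernoulli foreground probability, clamped for stability."""
    p = min(max(p, eps), 1.0 - eps)
    return -(p * math.log(p) + (1.0 - p) * math.log(1.0 - p))


def image_ambiguity(probs: Sequence[float]) -> float:
    """Hypothetical ambiguity proxy: mean per-pixel entropy of one image's prediction."""
    return sum(binary_entropy(p) for p in probs) / len(probs)


def weighted_consistency(
    pred_a: List[Sequence[float]], pred_b: List[Sequence[float]]
) -> float:
    """Average over images of exp(-ambiguity) * MSE between two predictions.

    Ambiguous images (high entropy) get a smaller weight, so the consistency
    constraint is applied mostly to images with low ambiguity.
    """
    total = 0.0
    for a, b in zip(pred_a, pred_b):
        mse = sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)
        weight = math.exp(-image_ambiguity(a))  # in [0.5, 1] for binary entropy
        total += weight * mse
    return total / len(pred_a)


# Two images with the same MSE between views; the confident one is weighted more.
confident = weighted_consistency([[0.99, 0.01]], [[0.89, 0.11]])
ambiguous = weighted_consistency([[0.5, 0.5]], [[0.6, 0.4]])
print(confident > ambiguous)  # True
```

An exponential weight keeps every image in the loss while down-weighting uncertain ones; a hard threshold on the ambiguity score would be an equally plausible reading of "regularizes the consistency on images with low ambiguity".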
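The boundary-aware contrastive loss is said to optimize only pixels around segmentation boundaries. A minimal sketch of that pixel-selection step, assuming a pixel counts as "around the boundary" when some pixel within a Chebyshev radius has a different label (the radius and the helper names `is_boundary` and `boundary_pixels` are illustrative assumptions), could look like this. A pixel-wise contrastive loss would then be computed only over the returned coordinates.

```python
from typing import List, Tuple

Mask = List[List[int]]


def is_boundary(mask: Mask, r: int, c: int, radius: int = 1) -> bool:
    """True if any pixel within `radius` (Chebyshev distance) of (r, c) has a different label."""
    h, w = len(mask), len(mask[0])
    label = mask[r][c]
    return any(
        mask[rr][cc] != label
        for rr in range(max(0, r - radius), min(h, r + radius + 1))
        for cc in range(max(0, c - radius), min(w, c + radius + 1))
    )


def boundary_pixels(mask: Mask, radius: int = 1) -> List[Tuple[int, int]]:
    """Coordinates of pixels near a label change; only these enter the contrastive loss."""
    return [
        (r, c)
        for r in range(len(mask))
        for c in range(len(mask[0]))
        if is_boundary(mask, r, c, radius)
    ]


# 6x6 background mask with a 2x2 foreground square in the middle.
mask = [[0] * 6 for _ in range(6)]
for r in (2, 3):
    for c in (2, 3):
        mask[r][c] = 1

selected = boundary_pixels(mask)
print(len(selected), "of", 36)  # prints "16 of 36"
```

Even on this toy mask, fewer than half of the pixels are kept; on real images, where structures are large relative to their outlines, the saving in pair-wise comparisons is typically much larger.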
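The prototype-aware contrastive loss builds one centroid per class and contrasts pixels against those centroids. A minimal sketch, assuming L2-normalized features, cosine similarity, a temperature of 0.1, and an InfoNCE-style softmax over class prototypes (all assumptions, not details from the abstract), is shown below. The labels may be ground truth or pseudo labels; each of the N pixels is compared with K prototypes, so the cost is O(N·K) rather than O(N²) for all pixel pairs.

```python
import math
from typing import Dict, List, Sequence

Vector = List[float]


def l2_normalize(v: Sequence[float]) -> Vector:
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]


def class_prototypes(
    features: Sequence[Sequence[float]], labels: Sequence[int]
) -> Dict[int, Vector]:
    """Per-class centroid of normalized features, re-normalized to unit length."""
    sums: Dict[int, Vector] = {}
    counts: Dict[int, int] = {}
    for f, y in zip(features, labels):
        f = l2_normalize(f)
        acc = sums.setdefault(y, [0.0] * len(f))
        sums[y] = [s + x for s, x in zip(acc, f)]
        counts[y] = counts.get(y, 0) + 1
    return {y: l2_normalize([s / counts[y] for s in sums[y]]) for y in sums}


def prototype_contrastive_loss(
    features: Sequence[Sequence[float]], labels: Sequence[int], temperature: float = 0.1
) -> float:
    """Mean over pixels of -log softmax(similarity to own class prototype)."""
    protos = class_prototypes(features, labels)
    classes = sorted(protos)
    total = 0.0
    for f, y in zip(features, labels):
        f = l2_normalize(f)
        logits = [sum(a * b for a, b in zip(f, protos[k])) / temperature for k in classes]
        m = max(logits)  # log-sum-exp shift for numerical stability
        log_denom = m + math.log(sum(math.exp(l - m) for l in logits))
        total += log_denom - logits[classes.index(y)]
    return total / len(features)


feats = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
separated = prototype_contrastive_loss(feats, [0, 0, 1, 1])  # labels match clusters
mixed = prototype_contrastive_loss(feats, [0, 1, 0, 1])  # labels cut across clusters
print(separated < mixed)  # True
```

When labels agree with the feature clusters, each pixel sits close to its own prototype and the loss is near zero; when they cut across the clusters, the two prototypes coincide and the loss approaches log 2.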
