Explainable Convolutional Networks for Crater Detection and Lunar Landing Navigation

Paper and Code

Aug 24, 2024

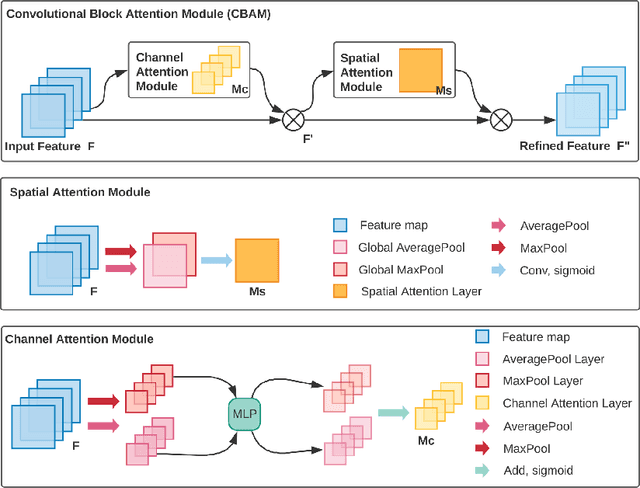

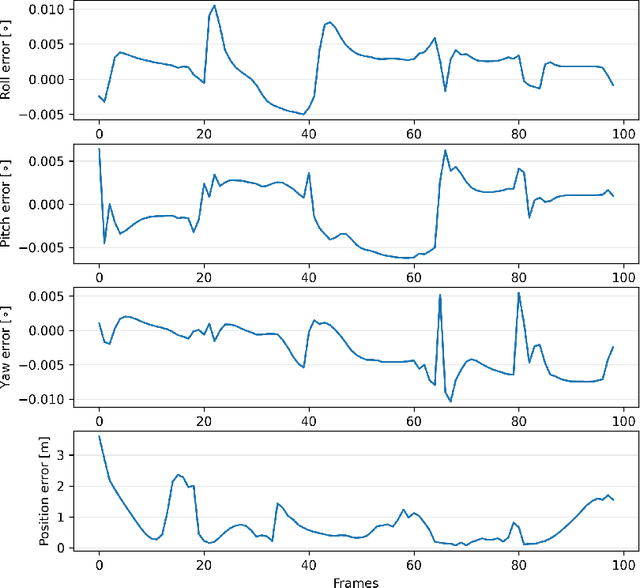

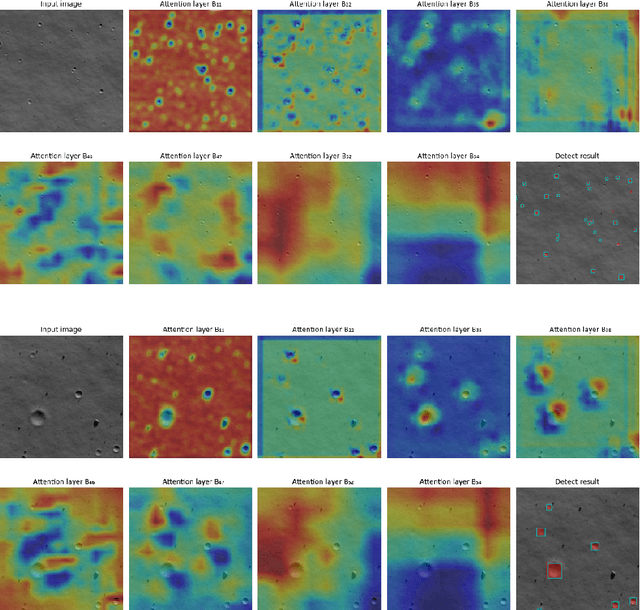

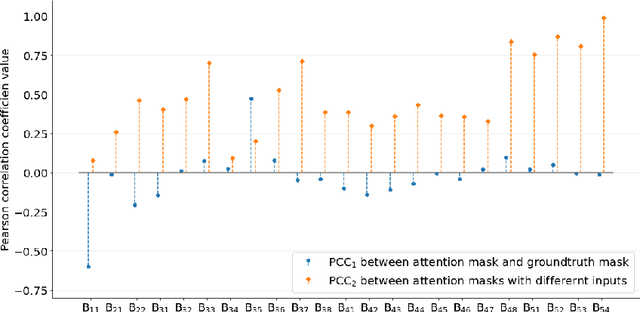

The Lunar landing has drawn great interest in lunar exploration in recent years, and autonomous lunar landing navigation is fundamental to this task. AI is expected to play a critical role in autonomous and intelligent space missions, yet human experts question the reliability of AI solutions. Thus, the \gls{xai} for vision-based lunar landing is studied in this paper, aiming at providing transparent and understandable predictions for intelligent lunar landing. Attention-based Darknet53 is proposed as the feature extraction structure. For crater detection and navigation tasks, attention-based YOLOv3 and attention-Darknet53-LSTM are presented respectively. The experimental results show that the offered networks provide competitive performance on relative crater detection and pose estimation during the lunar landing. The explainability of the provided networks is achieved by introducing an attention mechanism into the network during model building. Moreover, the PCC is utilised to quantitively evaluate the explainability of the proposed networks, with the findings showing the functions of various convolutional layers in the network.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge