Evolving Transferable Pruning Functions

Paper and Code

Oct 21, 2021

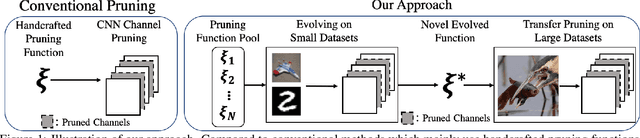

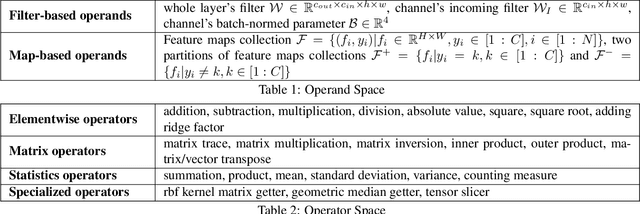

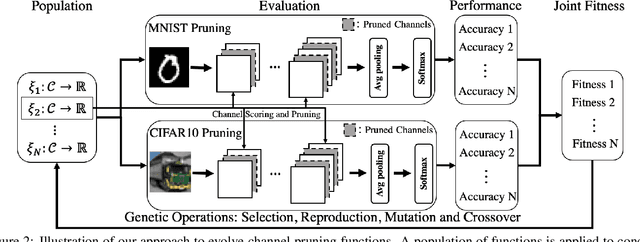

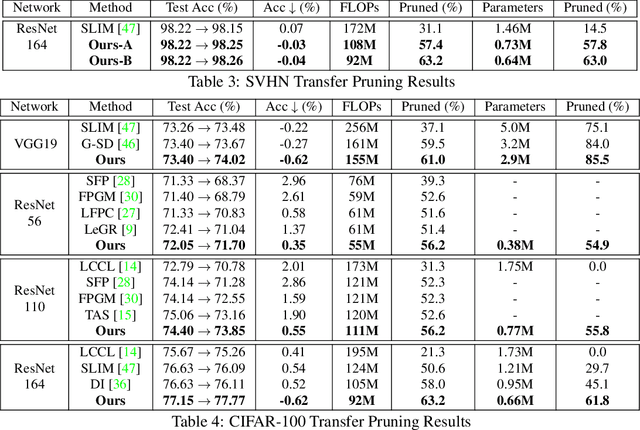

Channel pruning has made major headway in the design of efficient deep learning models. Conventional approaches adopt human-made pruning functions to score channels' importance for channel pruning, which requires domain knowledge and could be sub-optimal. In this work, we propose an end-to-end framework to automatically discover strong pruning metrics. Specifically, we craft a novel design space for expressing pruning functions and leverage an evolution strategy, genetic programming, to evolve high-quality and transferable pruning functions. Unlike prior methods, our approach can not only provide compact pruned networks for efficient inference, but also novel closed-form pruning metrics that are mathematically explainable and thus generalizable to different pruning tasks. The evolution is conducted on small datasets while the learned functions are transferable to larger datasets without any manual modification. Compared to direct evolution on a large dataset, our strategy shows better cost-effectiveness. When applied to more challenging datasets, different from those used in the evolution process, e.g., ILSVRC-2012, an evolved function achieves state-of-the-art pruning results.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge