Enhancing Navigation Benchmarking and Perception Data Generation for Row-based Crops in Simulation

Paper and Code

Jun 27, 2023

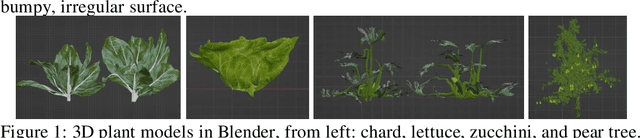

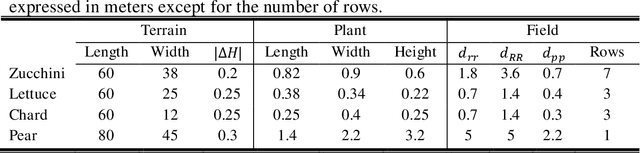

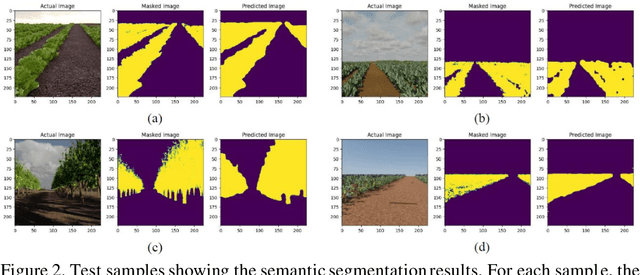

Service robotics is recently enhancing precision agriculture enabling many automated processes based on efficient autonomous navigation solutions. However, data generation and infield validation campaigns hinder the progress of large-scale autonomous platforms. Simulated environments and deep visual perception are spreading as successful tools to speed up the development of robust navigation with low-cost RGB-D cameras. In this context, the contribution of this work is twofold: a synthetic dataset to train deep semantic segmentation networks together with a collection of virtual scenarios for a fast evaluation of navigation algorithms. Moreover, an automatic parametric approach is developed to explore different field geometries and features. The simulation framework and the dataset have been evaluated by training a deep segmentation network on different crops and benchmarking the resulting navigation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge