End-to-end Learning for 3D Facial Animation from Raw Waveforms of Speech


Dec 07, 2017

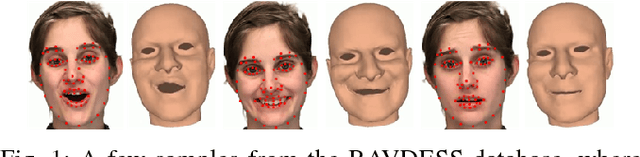

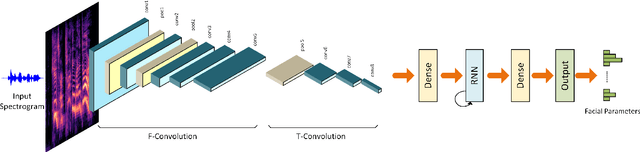

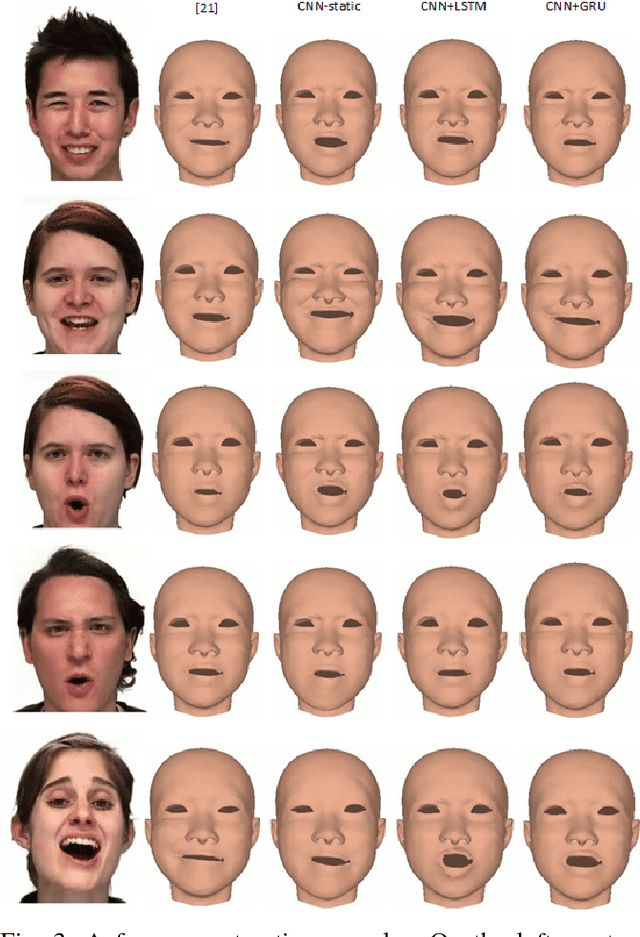

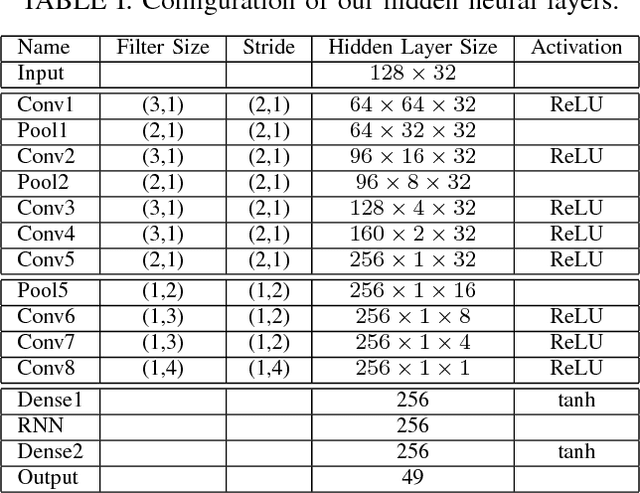

We present a deep learning framework for real-time, speech-driven 3D facial animation from raw waveforms alone. Our deep neural network directly maps an input sequence of speech audio to a series of micro facial action unit activations and head rotations that drive a 3D blendshape face model. In particular, the model learns latent representations of the time-varying contextual information and affective states within the speech. Hence, at inference the model not only activates the appropriate facial action units to depict different utterance-generating actions, in the form of lip movements, but also, without any assumption, automatically estimates the emotional intensity of the speaker and reproduces her ever-changing affective states by adjusting the strength of the facial unit activations. For example, in happy speech the mouth opens wider than normal while other facial units are relaxed; in a surprised state, both eyebrows are raised higher. Experiments on a diverse audiovisual corpus of different actors across a wide range of emotional states show interesting and promising results for our approach. Being speaker-independent, our generalized model is readily applicable to various tasks in human-machine interaction and animation.
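To make the output side of this pipeline concrete, the following is a minimal sketch of how per-frame action unit activations and a head rotation could drive a blendshape face model. It is not the authors' implementation: it assumes a standard linear blendshape formulation (neutral mesh plus weighted per-unit vertex offsets), activations clamped to [0, 1], and head rotation given as Euler angles in radians. The names `animate_frame`, `rotation_matrix`, and `matmul` are hypothetical, and the network that predicts the activations from audio is not shown.

```python
import math


def matmul(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def rotation_matrix(yaw, pitch, roll):
    """Build a 3x3 rotation from Euler angles in radians.

    Assumption: yaw is about the y axis, pitch about x, roll about z,
    composed as Rz @ Ry @ Rx. The abstract does not specify a convention.
    """
    cy, sy = math.cos(yaw), math.sin(yaw)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cr, sr = math.cos(roll), math.sin(roll)
    rz = [[cr, -sr, 0.0], [sr, cr, 0.0], [0.0, 0.0, 1.0]]
    ry = [[cy, 0.0, sy], [0.0, 1.0, 0.0], [-sy, 0.0, cy]]
    rx = [[1.0, 0.0, 0.0], [0.0, cp, -sp], [0.0, sp, cp]]
    return matmul(rz, matmul(ry, rx))


def animate_frame(neutral, deltas, activations, rotation):
    """Pose one frame of a linear blendshape face.

    neutral:     list of V vertices, each [x, y, z]
    deltas:      K lists of V offsets, one list per action unit
    activations: K predicted activation strengths (clamped to [0, 1] here)
    rotation:    (yaw, pitch, roll) head rotation in radians
    """
    weights = [min(max(a, 0.0), 1.0) for a in activations]
    rot = rotation_matrix(*rotation)
    posed = []
    for v, base in enumerate(neutral):
        # Linear blendshape: neutral vertex plus weighted action unit offsets.
        deformed = [base[c] + sum(w * deltas[k][v][c]
                                  for k, w in enumerate(weights))
                    for c in range(3)]
        # Apply the head rotation to the deformed vertex.
        posed.append([sum(rot[r][c] * deformed[c] for c in range(3))
                      for r in range(3)])
    return posed
```

Under these assumptions, a stronger activation scales the corresponding offset linearly, which is one simple way to read the abstract's point that emotional intensity is expressed by adjusting the strength of the facial unit activations.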
