Enabling Inference Privacy with Adaptive Noise Injection

Paper and Code

Apr 06, 2021

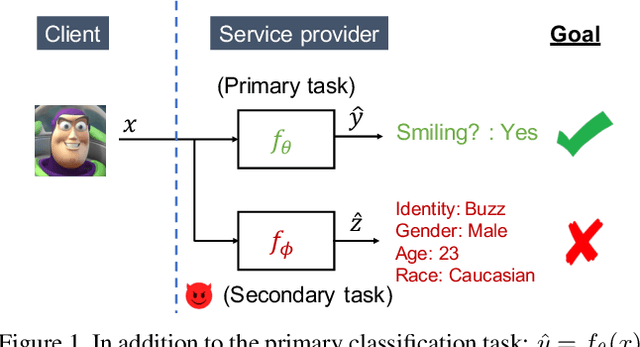

User-facing software services are becoming increasingly reliant on remote servers to host Deep Neural Network (DNN) models, which perform inference tasks for the clients. Such services require the client to send input data to the service provider, who processes it using a DNN and returns the output predictions to the client. Due to the rich nature of the inputs such as images and speech, the input often contains more information than what is necessary to perform the primary inference task. Consequently, in addition to the primary inference task, a malicious service provider could infer secondary (sensitive) attributes from the input, compromising the client's privacy. The goal of our work is to improve inference privacy by injecting noise to the input to hide the irrelevant features that are not conducive to the primary classification task. To this end, we propose Adaptive Noise Injection (ANI), which uses a light-weight DNN on the client-side to inject noise to each input, before transmitting it to the service provider to perform inference. Our key insight is that by customizing the noise to each input, we can achieve state-of-the-art trade-off between utility and privacy (up to 48.5% degradation in sensitive-task accuracy with <1% degradation in primary accuracy), significantly outperforming existing noise injection schemes. Our method does not require prior knowledge of the sensitive attributes and incurs minimal computational overheads.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge