Embedded Knowledge Distillation in Depth-level Dynamic Neural Network

Mar 01, 2021

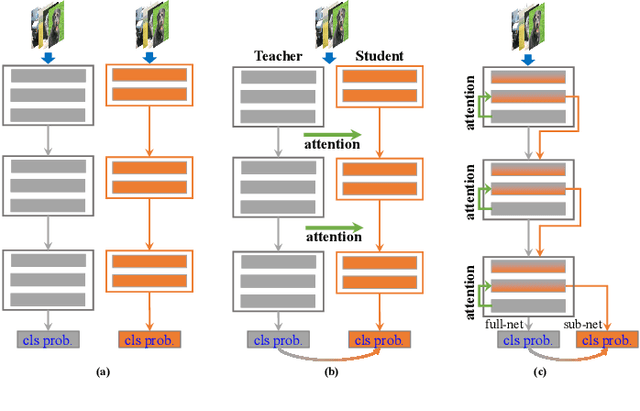

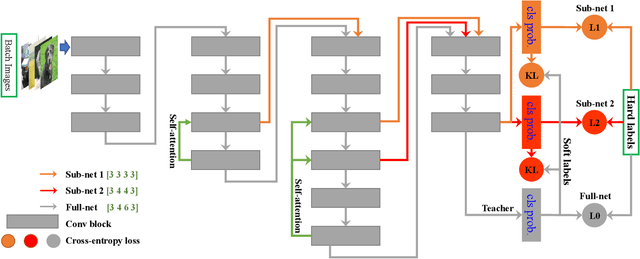

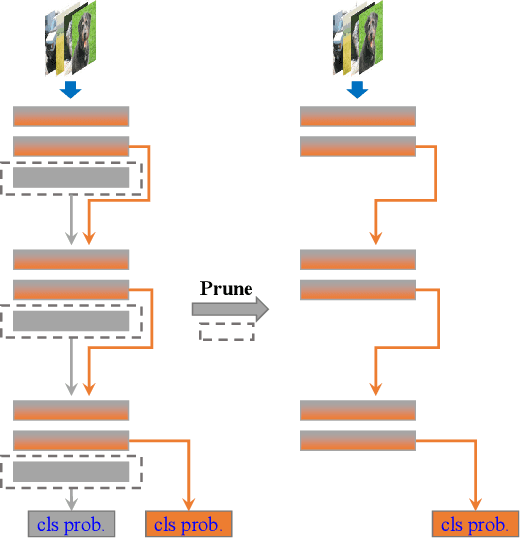

In real applications, devices with different computational resources need networks of different depths (e.g., ResNet-18/34/50) that still reach high accuracy. Existing strategies usually either design multiple networks (nets) and train them independently, or use compression techniques (e.g., low-rank decomposition, pruning, and teacher-to-student distillation) to shrink a trained large model into a small net. These methods suffer from the low accuracy of small nets, or from complicated training processes caused by their dependence on an accompanying large model. In this article, we propose an elegant Depth-level Dynamic Neural Network (DDNN) that integrates different-depth sub-nets of similar architectures. Instead of training individual nets with different depth configurations, we train a single DDNN that dynamically switches between different-depth sub-nets at runtime using one set of shared weight parameters. To improve the generalization of the sub-nets, we design the Embedded-Knowledge-Distillation (EKD) training mechanism for the DDNN, which transfers semantic knowledge from the teacher (full) net to multiple sub-nets. Specifically, the Kullback-Leibler divergence is introduced to constrain the consistency of posterior class probabilities between the full-net and each sub-net, and self-attention on same-resolution features at different depths is used to drive richer feature representations in the sub-nets. Thus, we can obtain multiple high-accuracy sub-nets simultaneously in a DDNN via online knowledge distillation in each training iteration without extra computation cost. Extensive experiments on the CIFAR-10, CIFAR-100, and ImageNet datasets demonstrate that sub-nets in a DDNN trained with EKD achieve better performance than depth-level pruning or individual training while preserving the original performance of the full-net.
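The Kullback-Leibler consistency term described above can be illustrated with a minimal sketch in plain Python. This is not the authors' implementation: the function names (`softmax`, `kl_divergence`, `ekd_style_loss`), the weighting factor `alpha`, and the `temperature` parameter are assumptions introduced for illustration, and the self-attention feature term is omitted entirely. The sketch only shows the general idea of adding, for each sub-net, a KL penalty between the full-net's class posterior and the sub-net's class posterior on top of the full-net's ordinary classification loss.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions of equal length."""
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))


def cross_entropy(probs, label, eps=1e-12):
    """Negative log-likelihood of the true class index."""
    return -math.log(probs[label] + eps)


def ekd_style_loss(full_logits, sub_logits_list, label,
                   alpha=1.0, temperature=1.0):
    """Hypothetical combined loss for one training sample.

    The full net (teacher) is trained with cross-entropy; each sub-net
    (student) adds a KL term pulling its posterior toward the teacher's.
    `alpha` and `temperature` are illustrative assumptions, not values
    taken from the paper.
    """
    teacher = softmax(full_logits, temperature)
    loss = cross_entropy(softmax(full_logits), label)
    for sub_logits in sub_logits_list:
        student = softmax(sub_logits, temperature)
        loss += alpha * kl_divergence(teacher, student)
    return loss


if __name__ == "__main__":
    full = [2.0, 1.0, 0.1]          # logits from the full-depth net
    subs = [[1.5, 1.2, 0.3],        # logits from a shallower sub-net
            [0.9, 1.1, 0.8]]        # logits from an even shallower sub-net
    print(ekd_style_loss(full, subs, label=0))
```

In an actual training framework the teacher's probabilities would typically be treated as constants (no gradient) when computing the KL term, so that only the sub-net is pulled toward the full-net; that detail is also an assumption here rather than something stated in the abstract.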
