Elastic Consistency: A General Consistency Model for Distributed Stochastic Gradient Descent

Jan 16, 2020

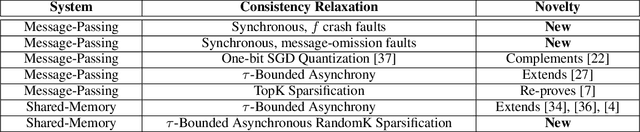

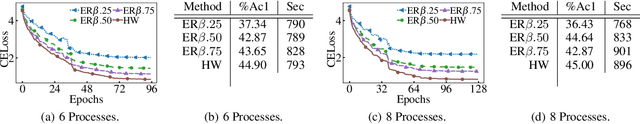

Machine learning has made tremendous progress in recent years, with models matching or even surpassing humans on a series of specialized tasks. One key element behind this progress has been the ability to train machine learning models in large-scale distributed shared-memory and message-passing environments. Many of these models are trained using variants of stochastic gradient descent (SGD). In this paper, we introduce a general consistency condition covering communication-reduced and asynchronous distributed SGD implementations. Our framework, called elastic consistency, enables us to derive convergence bounds for a variety of distributed SGD methods used in practice to train large-scale machine learning models. The proposed framework de-clutters the implementation-specific convergence analysis and provides an abstraction to derive convergence bounds. We use the framework to analyze a sparsification scheme for distributed SGD in an asynchronous setting, for both convex and non-convex objectives. We implement this distributed SGD variant to train deep CNN models in an asynchronous shared-memory setting. Empirical results show that error feedback does not necessarily improve the convergence of sparsified asynchronous distributed SGD, which corroborates an insight suggested by our convergence analysis.
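To make the terms "sparsification" and "error feedback" concrete, the following is a minimal single-process sketch. It is not the paper's implementation. It assumes top-k magnitude sparsification, which the abstract does not specify, and the names `top_k_sparsify` and `sparsified_step` are hypothetical. It also leaves out asynchrony and communication entirely, and only shows how a residual of dropped update entries can be carried into the next step.

```python
def top_k_sparsify(vec, k):
    """Keep the k largest-magnitude entries of vec and zero out the rest."""
    if k <= 0:
        return [0.0] * len(vec)
    order = sorted(range(len(vec)), key=lambda i: abs(vec[i]), reverse=True)
    keep = set(order[:k])
    return [v if i in keep else 0.0 for i, v in enumerate(vec)]


def sparsified_step(params, grad, residual, lr, k, error_feedback=True):
    """One SGD step that applies only a sparsified update.

    With error_feedback=True, the entries dropped by sparsification are
    stored in the residual and added back to the next update (assumed scheme).
    With error_feedback=False, dropped entries are simply discarded.
    """
    carried = residual if error_feedback else [0.0] * len(grad)
    update = [lr * g + r for g, r in zip(grad, carried)]
    sent = top_k_sparsify(update, k)
    if error_feedback:
        new_residual = [u - s for u, s in zip(update, sent)]
    else:
        new_residual = [0.0] * len(grad)
    new_params = [p - s for p, s in zip(params, sent)]
    return new_params, new_residual


if __name__ == "__main__":
    params = [0.0, 0.0, 0.0]
    residual = [0.0, 0.0, 0.0]
    grad = [1.0, -3.0, 0.5]  # illustrative gradient, not real data
    for _ in range(2):
        params, residual = sparsified_step(params, grad, residual, lr=0.5, k=1)
    print(params, residual)
```

Switching `error_feedback` to `False` gives the variant without residual accumulation, which is the comparison the empirical claim in the abstract refers to.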
