EgoTransfer: Transferring Motion Across Egocentric and Exocentric Domains using Deep Neural Networks

Paper and Code

Dec 17, 2016

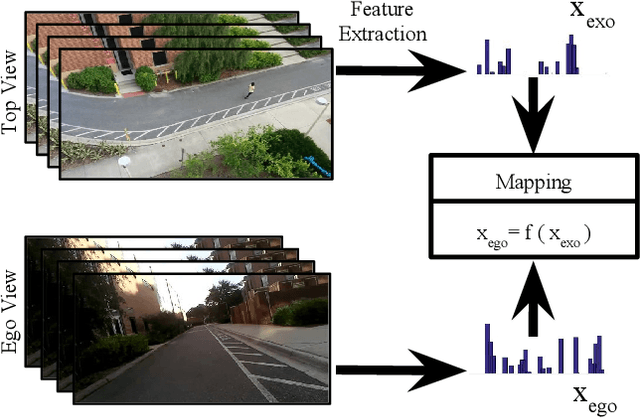

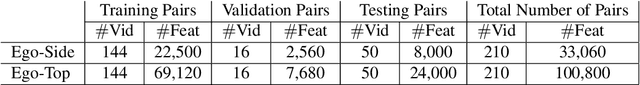

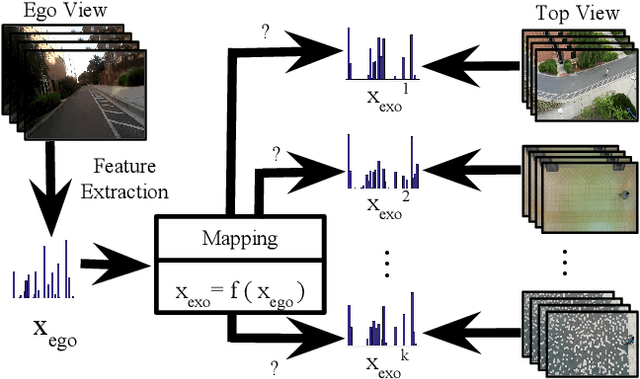

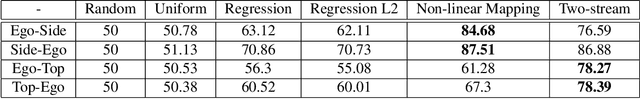

Mirror neurons have been observed in the primary motor cortex of primate species, in particular in humans and monkeys. A mirror neuron fires when a person performs a certain action, and also when he observes the same action being performed by another person. A crucial step towards building fully autonomous intelligent systems with human-like learning abilities is the capability in modeling the mirror neuron. On one hand, the abundance of egocentric cameras in the past few years has offered the opportunity to study a lot of vision problems from the first-person perspective. A great deal of interesting research has been done during the past few years, trying to explore various computer vision tasks from the perspective of the self. On the other hand, videos recorded by traditional static cameras, capture humans performing different actions from an exocentric third-person perspective. In this work, we take the first step towards relating motion information across these two perspectives. We train models that predict motion in an egocentric view, by observing it from an exocentric view, and vice versa. This allows models to predict how an egocentric motion would look like from outside. To do so, we train linear and nonlinear models and evaluate their performance in terms of retrieving the egocentric (exocentric) motion features, while having access to an exocentric (egocentric) motion feature. Our experimental results demonstrate that motion information can be successfully transferred across the two views.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge