Efficient transfer learning and online adaptation with latent variable models for continuous control

Dec 08, 2018

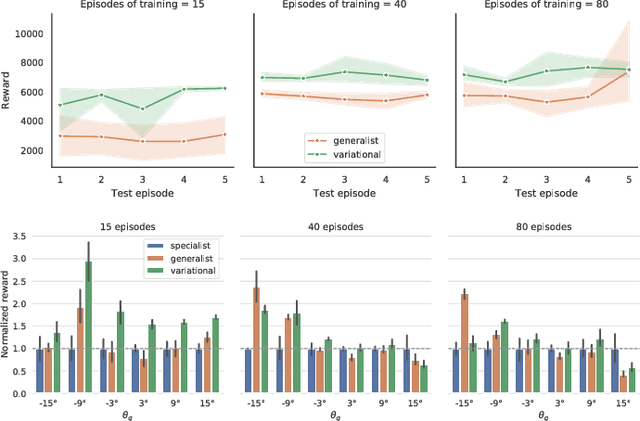

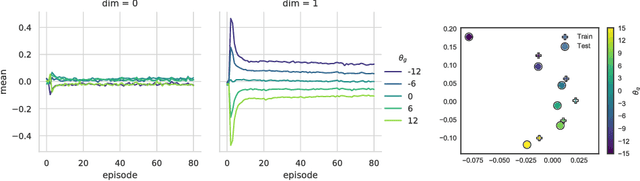

Traditional model-based RL relies on hand-specified or learned models of the environment's transition dynamics. These methods are sample efficient and facilitate learning in the real world, but they fail to generalize to subtle variations in the underlying dynamics, e.g., due to differences in mass, friction, or actuators across robotic agents or across time. We propose using variational inference to learn an explicit latent representation of unknown environment properties that accelerates learning and facilitates generalization to novel environments at test time. We use online Bayesian inference over these learned latents to adapt rapidly to changes in the environment without retaining large replay buffers of recent data. Combined with a neural network ensemble that models the dynamics and captures uncertainty over them, our approach demonstrates positive transfer during training and online adaptation on the continuous control task HalfCheetah.
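To make the idea of buffer-free online adaptation concrete, the following is a minimal sketch and not the paper's method. It swaps the learned neural network dynamics and variational inference for a toy linear-Gaussian model in which a single scalar latent `z` (think of an unknown inverse-mass-like property) scales the effect of the action on the state change. Under that assumption the Bayesian posterior over `z` has a closed form and can be updated one transition at a time, so no past data needs to be stored. The names `update_latent_posterior` and `run_demo`, the prior, and the noise level are all hypothetical choices made for illustration.

```python
import numpy as np


def update_latent_posterior(mean, var, feature, target, noise_var):
    """One recursive Bayesian update of a Gaussian belief over a scalar latent z.

    Assumed toy model (not from the paper): target = z * feature + noise,
    with noise ~ N(0, noise_var) and current belief z ~ N(mean, var).
    """
    # Precisions add: prior precision plus the information in this observation.
    precision = 1.0 / var + feature ** 2 / noise_var
    new_var = 1.0 / precision
    # Posterior mean is the precision-weighted combination of prior and data.
    new_mean = new_var * (mean / var + feature * target / noise_var)
    return new_mean, new_var


def run_demo(true_z=2.5, steps=200, noise_var=0.01, seed=0):
    """Stream simulated transitions and adapt the belief over z online."""
    rng = np.random.default_rng(seed)
    mean, var = 0.0, 10.0  # broad, hypothetical prior over the latent
    for _ in range(steps):
        action = rng.uniform(-1.0, 1.0)
        # Simulated state change from the unknown environment.
        delta = true_z * action + rng.normal(0.0, np.sqrt(noise_var))
        # Only the current transition is used; nothing is kept in a buffer.
        mean, var = update_latent_posterior(mean, var, action, delta, noise_var)
    return mean, var


if __name__ == "__main__":
    posterior_mean, posterior_var = run_demo()
    print(f"posterior mean: {posterior_mean:.3f}, variance: {posterior_var:.2e}")
```

The belief is summarized entirely by a mean and a variance, which is what allows the update to discard each transition after use. In the setting the abstract describes, the latent would instead condition a learned ensemble of dynamics models, and the posterior update would generally need approximate inference rather than this closed form.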
