Efficient Density Estimation via Piecewise Polynomial Approximation

Paper and Code

May 14, 2013

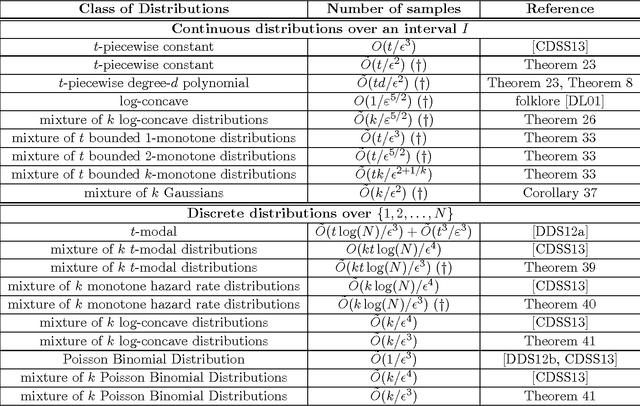

We give a highly efficient "semi-agnostic" algorithm for learning univariate probability distributions that are well approximated by piecewise polynomial density functions. Let $p$ be an arbitrary distribution over an interval $I$ which is $\tau$-close (in total variation distance) to an unknown probability distribution $q$ that is defined by an unknown partition of $I$ into $t$ intervals and $t$ unknown degree-$d$ polynomials specifying $q$ over each of the intervals. We give an algorithm that draws $\tilde{O}(t\new{(d+1)}/\eps^2)$ samples from $p$, runs in time $\poly(t,d,1/\eps)$, and with high probability outputs a piecewise polynomial hypothesis distribution $h$ that is $(O(\tau)+\eps)$-close (in total variation distance) to $p$. This sample complexity is essentially optimal; we show that even for $\tau=0$, any algorithm that learns an unknown $t$-piecewise degree-$d$ probability distribution over $I$ to accuracy $\eps$ must use $\Omega({\frac {t(d+1)} {\poly(1 + \log(d+1))}} \cdot {\frac 1 {\eps^2}})$ samples from the distribution, regardless of its running time. Our algorithm combines tools from approximation theory, uniform convergence, linear programming, and dynamic programming. We apply this general algorithm to obtain a wide range of results for many natural problems in density estimation over both continuous and discrete domains. These include state-of-the-art results for learning mixtures of log-concave distributions; mixtures of $t$-modal distributions; mixtures of Monotone Hazard Rate distributions; mixtures of Poisson Binomial Distributions; mixtures of Gaussians; and mixtures of $k$-monotone densities. Our general technique yields computationally efficient algorithms for all these problems, in many cases with provably optimal sample complexities (up to logarithmic factors) in all parameters.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge