Editing Factual Knowledge in Language Models


Apr 16, 2021

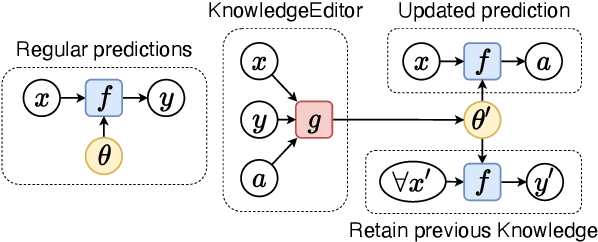

The factual knowledge acquired during pre-training and stored in the parameters of language models (LMs) can be useful in downstream tasks (e.g., question answering or textual inference). However, some facts can be incorrectly induced or become obsolete over time. We present KnowledgeEditor, a method that can be used to edit this knowledge and thus fix 'bugs' or unexpected predictions without the need for expensive re-training or fine-tuning. Besides being computationally efficient, KnowledgeEditor does not require any modifications to LM pre-training (e.g., the use of meta-learning). In our approach, we train a hyper-network with constrained optimization to modify a fact without affecting the rest of the knowledge; the trained hyper-network is then used to predict the weight update at test time. We show KnowledgeEditor's efficacy with two popular architectures and knowledge-intensive tasks: i) a BERT model fine-tuned for fact-checking, and ii) a sequence-to-sequence BART model for question answering. With our method, changing a prediction for the specific wording of a query tends to result in a consistent change in predictions for its paraphrases as well. We show that this can be further encouraged by exploiting (e.g., automatically generated) paraphrases during training. Interestingly, our hyper-network can be regarded as a 'probe' revealing which components of a model need to be changed to manipulate factual knowledge; our analysis shows that the updates tend to be concentrated on a small subset of components. Code is available at https://github.com/nicola-decao/KnowledgeEditor
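The trade-off described in the abstract, changing one prediction while keeping the rest of the model's behaviour within a margin, can be illustrated with a toy sketch. Everything in it is an assumption made for illustration and is not taken from the KnowledgeEditor code: the "model" is a small logistic classifier, the hypothetical `propose_update` merely rescales the gradient of the edit loss (a trained hyper-network would learn this mapping instead), and the constraint is enforced by a plain grid search rather than by the constrained optimization used in the paper.

```python
import math
import random


def predict(w, x):
    """Probability of the positive class under a toy logistic model."""
    z = sum(wi * xi for wi, xi in zip(w, x))
    z = max(-30.0, min(30.0, z))  # clamp the logit so the logs below stay finite
    return 1.0 / (1.0 + math.exp(-z))


def edit_loss(w, x, target):
    """Negative log-likelihood of the desired (edited) label."""
    p = predict(w, x)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


def kl_drift(w_old, w_new, xs):
    """Mean KL(old || new) between predictions on inputs that should not change."""
    total = 0.0
    for x in xs:
        p, q = predict(w_old, x), predict(w_new, x)
        total += p * math.log(p / q) + (1.0 - p) * math.log((1.0 - p) / (1.0 - q))
    return total / len(xs)


def propose_update(w, x, target, scale):
    """Hypothetical stand-in for the hyper-network: a rescaled gradient step."""
    err = predict(w, x) - target  # derivative of the edit loss w.r.t. the logit
    return [-scale * err * xi for xi in x]


random.seed(0)
dim = 8
w = [random.gauss(0.0, 1.0) for _ in range(dim)]
x_edit = [random.gauss(0.0, 1.0) for _ in range(dim)]
others = [[random.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(64)]
target = 0.0 if predict(w, x_edit) > 0.5 else 1.0  # ask for the opposite label

margin = 0.05  # assumed bound on how much the other predictions may drift
best = None    # (scale, edit loss, edited weights)
for step in range(101):
    scale = 0.05 * step
    delta = propose_update(w, x_edit, target, scale)
    w_new = [wi + di for wi, di in zip(w, delta)]
    if kl_drift(w, w_new, others) <= margin:
        loss = edit_loss(w_new, x_edit, target)
        if best is None or loss < best[1]:
            best = (scale, loss, w_new)

print("chosen scale:", best[0])
print("edit loss before/after:", edit_loss(w, x_edit, target), best[1])
print("drift on other inputs:", kl_drift(w, best[2], others))
```

The grid search always has at least one feasible candidate, because a scale of zero leaves the weights unchanged. Whether the prediction on `x_edit` actually flips under the chosen margin depends on the random data, which mirrors the tension between making an edit succeed and preserving the rest of the model's predictions.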
