Dynamic Competency Self-Assessment for Autonomous Agents

Mar 03, 2023

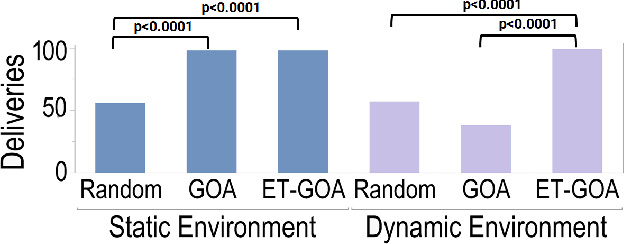

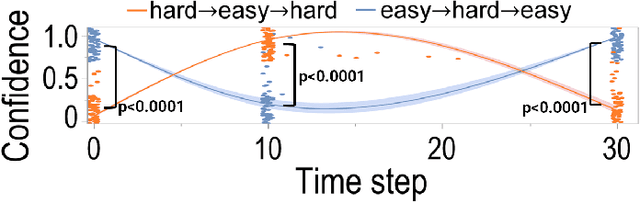

As autonomous robots are deployed in increasingly complex environments, platform degradation, environmental uncertainties, and deviations from validated operating conditions can make it difficult for human partners to understand robot capabilities and limitations. A robot's ability to self-assess its competency in dynamic and uncertain environments will be a crucial next step toward successful human-robot teaming. This work presents and evaluates an Event-Triggered Generalized Outcome Assessment (ET-GOA) algorithm that lets autonomous agents dynamically assess task confidence during execution. The algorithm applies a fast online statistical test that compares the agent's observations with its model predictions to decide when a competency assessment is needed. We provide experimental results using ET-GOA to generate competency reports during a simulated delivery task and suggest future research directions for self-assessing agents.
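The event-triggering idea can be illustrated with a minimal sketch. This is not the ET-GOA implementation from the paper: it assumes, purely for illustration, that the agent's model predicts each scalar observation as a Gaussian (mean and standard deviation), and it uses a two-sided tail probability as the "fast online statistical test". The function names, the `threshold` parameter, and the `assess` callback are all hypothetical.

```python
import math


def tail_probability(observation, predicted_mean, predicted_std):
    """Two-sided Gaussian tail probability of an observation.

    Assumption: the model's prediction is Gaussian. The abstract does not
    specify the test, so this stands in for it.
    """
    z = abs(observation - predicted_mean) / predicted_std
    # P(|Z| > z) for a standard normal Z equals erfc(z / sqrt(2)).
    return math.erfc(z / math.sqrt(2.0))


def should_reassess(observation, predicted_mean, predicted_std, threshold=0.05):
    """Trigger when the observation is unlikely under the model prediction."""
    return tail_probability(observation, predicted_mean, predicted_std) < threshold


def run_episode(observations, predictions, assess, threshold=0.05):
    """Call the (hypothetical) assessment routine only when the test fires.

    `predictions` is a sequence of (mean, std) pairs, one per observation.
    `assess` is a placeholder for an expensive competency assessment.
    Returns a list of (step, report) pairs for the steps that triggered.
    """
    reports = []
    for step, (obs, (mean, std)) in enumerate(zip(observations, predictions)):
        if should_reassess(obs, mean, std, threshold):
            reports.append((step, assess(step, obs)))
    return reports
```

The point of the design is cost: the check at each step is a single cheap computation, while the full assessment runs only when observations stop matching what the model expected.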
