DropCluster: A structured dropout for convolutional networks


Feb 07, 2020

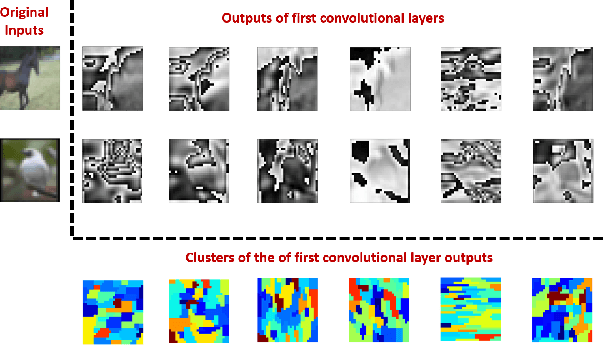

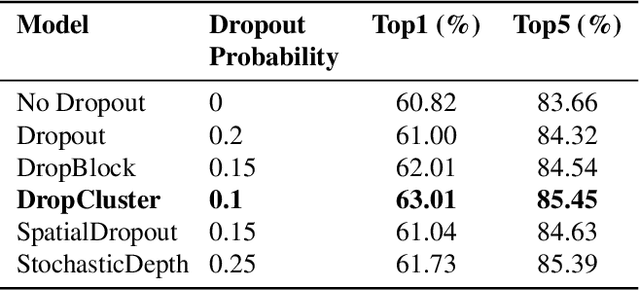

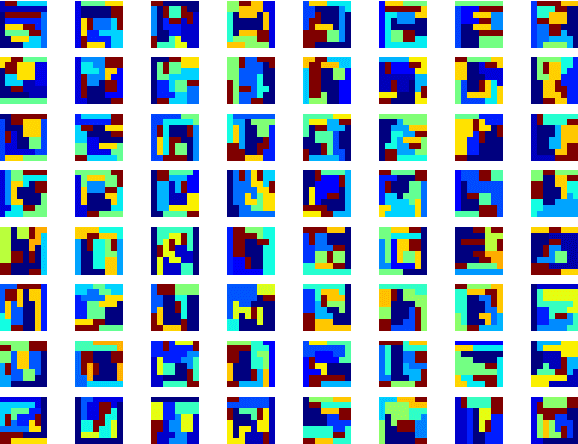

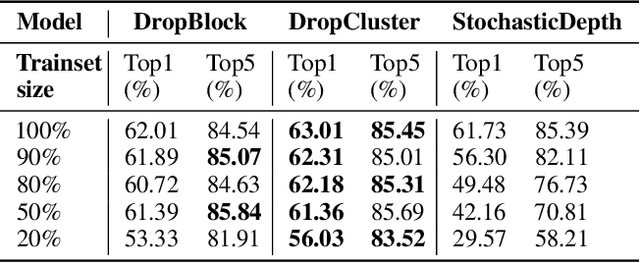

Dropout is less effective as a regularizer in convolutional layers than in fully connected layers, because it drops individual features at random. When features are spatially correlated, as they are in convolutional layers, information about dropped pixels can still propagate to later layers through neighboring pixels. More structured forms of dropout have been proposed to address this problem, but they do not adapt to the data. In this work, we introduce DropCluster, a novel structured regularization for convolutional layers that relies on data-driven structure. It finds clusters of correlated features in convolutional layer outputs and drops the clusters randomly at each iteration. The clusters are learned and updated during model training, so they adapt both to the data and to the model weights. Our experiments on the ResNet-50 architecture demonstrate that our approach achieves better performance than DropBlock and other existing structured dropout variants. We also demonstrate the robustness of our approach when training data is limited and when the data is corrupted at test time.
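The idea of dropping correlated features together can be illustrated with a minimal NumPy sketch. It is not the paper's implementation: the abstract does not specify the clustering procedure, so the greedy correlation-threshold grouping, the `threshold` parameter, the single-channel `(N, H, W)` layout, and the function names `correlation_clusters` and `drop_clusters` are all assumptions made for illustration.

```python
import numpy as np

def correlation_clusters(acts, threshold=0.5):
    """Greedily group spatial positions whose activations are correlated.

    Illustrative assumption, not the paper's clustering method.
    acts: array of shape (N, H, W), one feature map per sample.
    Returns an int array of shape (H, W) holding a cluster id per position.
    """
    n, h, w = acts.shape
    flat = acts.reshape(n, h * w)
    # Correlation between positions, measured across the N samples.
    corr = np.nan_to_num(np.corrcoef(flat, rowvar=False))
    labels = -np.ones(h * w, dtype=int)
    next_id = 0
    for i in range(h * w):
        if labels[i] >= 0:
            continue
        # Seed a new cluster at i and absorb unassigned correlated positions.
        members = (labels < 0) & (corr[i] >= threshold)
        members[i] = True
        labels[members] = next_id
        next_id += 1
    return labels.reshape(h, w)

def drop_clusters(x, labels, drop_prob, rng):
    """Zero out whole clusters per sample and rescale the survivors.

    x: (N, H, W) activations; labels: (H, W) cluster ids; 0 <= drop_prob < 1.
    """
    n_clusters = labels.max() + 1
    keep = rng.random((x.shape[0], n_clusters)) >= drop_prob  # (N, K)
    mask = keep[:, labels]                                    # (N, H, W)
    return x * mask / (1.0 - drop_prob)

# Toy data: the left and right halves follow two uncorrelated signals.
a = np.array([1, -1, 1, -1, 1, -1, 1, -1], dtype=float)
b = np.array([1, 1, -1, -1, 1, 1, -1, -1], dtype=float)
acts = np.empty((8, 4, 4))
acts[:, :, :2] = a[:, None, None]
acts[:, :, 2:] = b[:, None, None]

labels = correlation_clusters(acts)
out = drop_clusters(acts, labels, drop_prob=0.5, rng=np.random.default_rng(0))
```

On this toy input the two halves land in separate clusters, and each sample keeps or loses a half as a unit. In a training loop, one would presumably recompute `labels` periodically from recent layer outputs, since the abstract states that the clusters are updated during training.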
