Domain Adaptation and Image Classification via Deep Conditional Adaptation Network


Jun 14, 2020

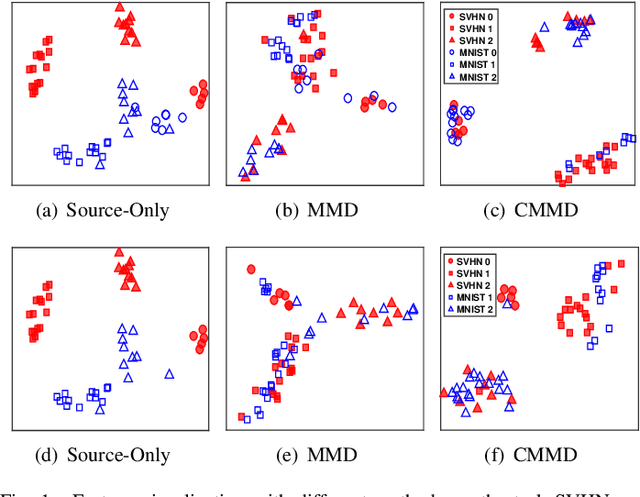

Unsupervised domain adaptation (UDA) aims to generalize a supervised model trained on a source domain to an unlabeled target domain. Marginal distribution alignment of feature spaces is widely used to reduce the discrepancy between the source and target domains. However, such methods assume that the source and target domains share the same label distribution, which limits their application scope. In this paper, we consider a more general scenario in which the label distributions of the source and target domains differ. In this scenario, methods based on marginal distribution alignment are vulnerable to negative transfer. To address this issue, we propose a novel unsupervised domain adaptation method, Deep Conditional Adaptation Network (DCAN), based on conditional distribution alignment of feature spaces. Specifically, we reduce the domain discrepancy by minimizing the Conditional Maximum Mean Discrepancy between the conditional distributions of deep features on the source and target domains, and we extract discriminative information from the target domain by maximizing the mutual information between samples and their predicted labels. In addition, DCAN can be used to address a special scenario, Partial UDA, where the target-domain categories are a subset of the source-domain categories. Experiments on both UDA and Partial UDA show that DCAN achieves superior classification performance over state-of-the-art methods. In particular, DCAN achieves large improvements on tasks with large differences in label distributions (6.1% on SVHN to MNIST, 5.4% on UDA tasks on Office-Home, and 4.5% on Partial UDA tasks on Office-Home).
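To make the mutual-information term more concrete, here is a minimal NumPy sketch of a commonly used batch-level estimate: the entropy of the average prediction minus the average entropy of the individual predictions. The abstract does not give the exact estimator used in DCAN, so the formula, the function name `mutual_information`, and the `eps` smoothing constant are all illustrative assumptions rather than the authors' implementation.

```python
import numpy as np


def mutual_information(probs, eps=1e-12):
    """Batch estimate of I(X; Y_hat) from softmax outputs of shape (n, classes).

    Assumption: uses H(mean prediction) - mean(H(prediction)), a common
    estimator; the abstract does not specify the exact form used in DCAN.
    """
    probs = np.asarray(probs, dtype=float)
    # Entropy of the batch-averaged prediction (rewards diverse predictions).
    marginal = probs.mean(axis=0)
    h_marginal = -np.sum(marginal * np.log(marginal + eps))
    # Average per-sample entropy (penalizes uncertain predictions).
    h_conditional = -np.mean(np.sum(probs * np.log(probs + eps), axis=1))
    return h_marginal - h_conditional


# Hypothetical target-domain predictions for two samples and two classes.
confident = np.array([[1.0, 0.0], [0.0, 1.0]])
uniform = np.array([[0.5, 0.5], [0.5, 0.5]])

mi_confident = mutual_information(confident)  # close to log(2)
mi_uniform = mutual_information(uniform)      # close to 0
```

Under this estimator, confident and class-balanced predictions on the target domain yield high mutual information, while uniformly uncertain predictions yield a value near zero. In a training loop, the negative of this quantity would typically be added to the loss so that minimizing the loss maximizes the mutual information.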
