Dolphin: A Challenging and Diverse Benchmark for Arabic NLG


May 24, 2023

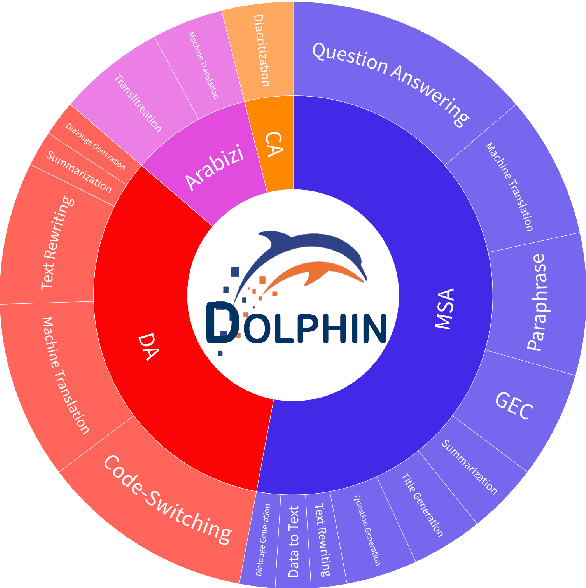

We present Dolphin, a novel benchmark that addresses the need for an evaluation framework covering the wide collection of Arabic languages and varieties. The benchmark spans 13 NLG tasks, including text summarization, machine translation, question answering, and dialogue generation. Dolphin comprises 40 diverse and representative public datasets across 50 test splits, curated to reflect real-world scenarios and the linguistic richness of Arabic. It is intended to set a new standard for evaluating the performance and generalization capabilities of Arabic and multilingual models. We provide an extensive analysis of Dolphin, highlighting its diversity and identifying gaps in current Arabic NLG research. We also evaluate several Arabic and multilingual models on the benchmark, establishing strong baselines against which researchers can compare.
