Disentangling Interpretable Generative Parameters of Random and Real-World Graphs

Paper and Code

Nov 06, 2019

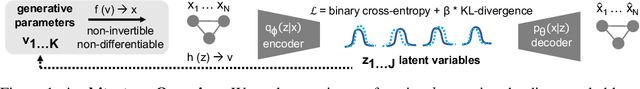

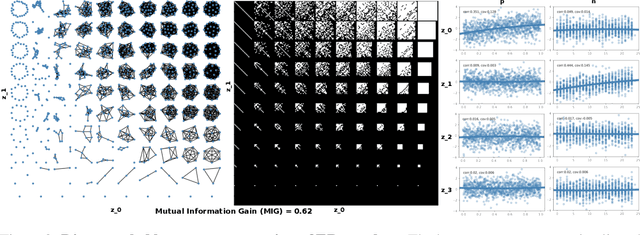

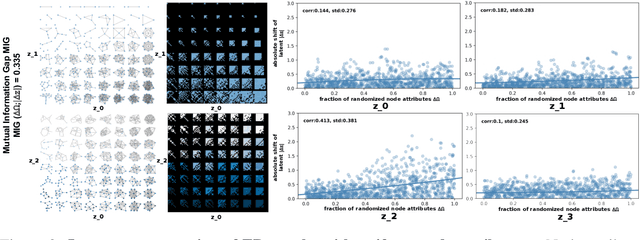

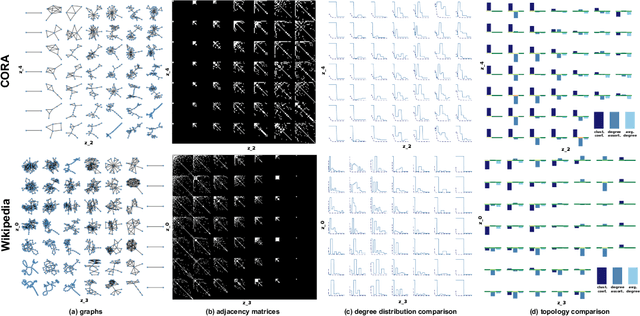

While a wide range of interpretable generative procedures for graphs exist, matching observed graph topologies with such procedures and choices for its parameters remains an open problem. Devising generative models that closely reproduce real-world graphs requires domain knowledge and time-consuming simulation. While existing deep learning approaches rely on less manual modelling, they offer little interpretability. This work approaches graph generation (decoding) as the inverse of graph compression (encoding). We show that in a disentanglement-focused deep autoencoding framework, specifically Beta-Variational Autoencoders (Beta-VAE), choices of generative procedures and their parameters arise naturally in the latent space. Our model is capable of learning disentangled, interpretable latent variables that represent the generative parameters of procedurally generated random graphs and real-world graphs. The degree of disentanglement is quantitatively measured using the Mutual Information Gap (MIG). When training our Beta-VAE model on ER random graphs, its latent variables have a near one-to-one mapping to the ER random graph parameters n and p. We deploy the model to analyse the correlation between graph topology and node attributes measuring their mutual dependence without handpicking topological properties.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge