DisCo: Remedy Self-supervised Learning on Lightweight Models with Distilled Contrastive Learning

Paper and Code

Apr 19, 2021

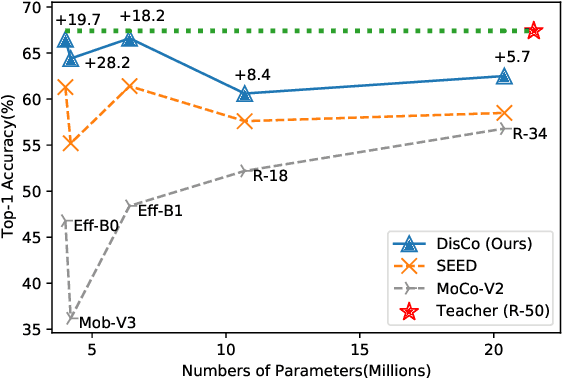

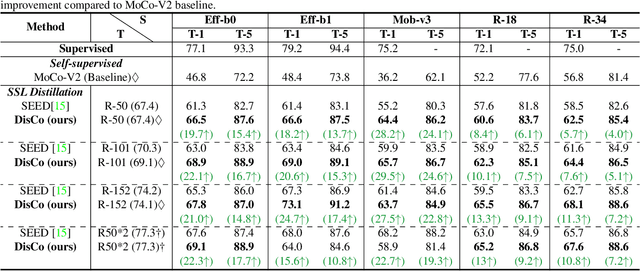

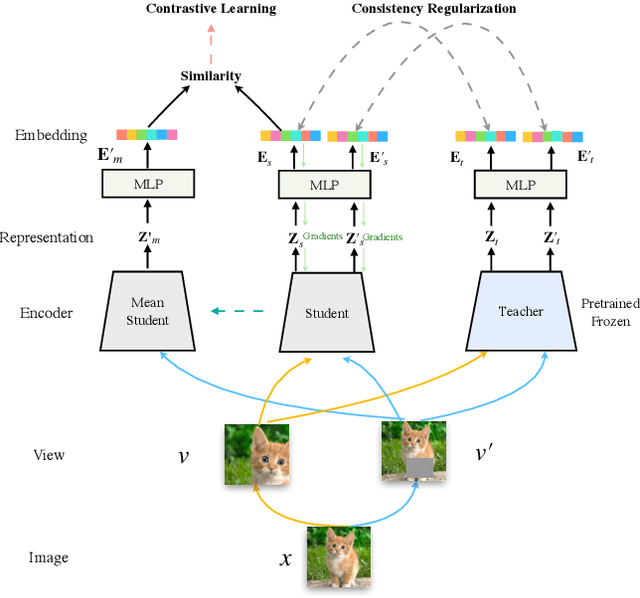

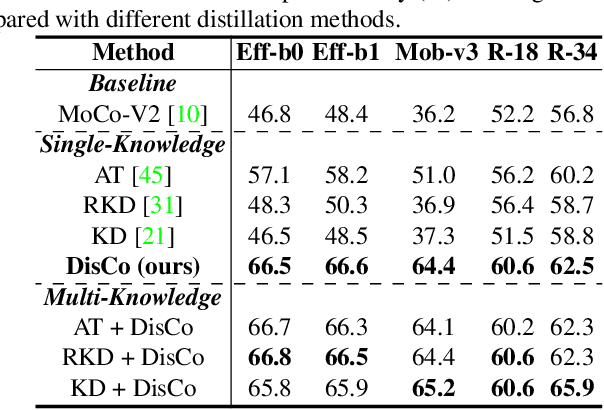

While self-supervised representation learning (SSL) has received widespread attention from the community, recent research argue that its performance will suffer a cliff fall when the model size decreases. The current method mainly relies on contrastive learning to train the network and in this work, we propose a simple yet effective Distilled Contrastive Learning (DisCo) to ease the issue by a large margin. Specifically, we find the final embedding obtained by the mainstream SSL methods contains the most fruitful information, and propose to distill the final embedding to maximally transmit a teacher's knowledge to a lightweight model by constraining the last embedding of the student to be consistent with that of the teacher. In addition, in the experiment, we find that there exists a phenomenon termed Distilling BottleNeck and present to enlarge the embedding dimension to alleviate this problem. Our method does not introduce any extra parameter to lightweight models during deployment. Experimental results demonstrate that our method achieves the state-of-the-art on all lightweight models. Particularly, when ResNet-101/ResNet-50 is used as teacher to teach EfficientNet-B0, the linear result of EfficientNet-B0 on ImageNet is very close to ResNet-101/ResNet-50, but the number of parameters of EfficientNet-B0 is only 9.4%/16.3% of ResNet-101/ResNet-50.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge