Directional Deep Embedding and Appearance Learning for Fast Video Object Segmentation

Paper and Code

Feb 17, 2020

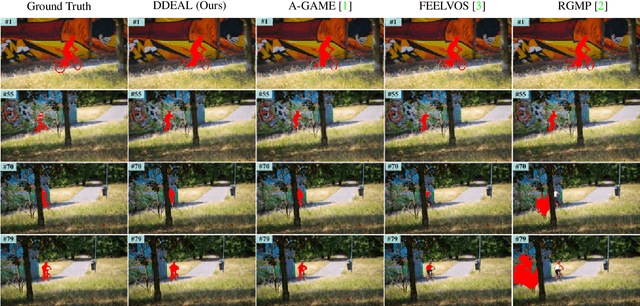

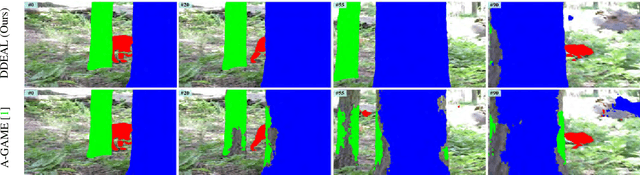

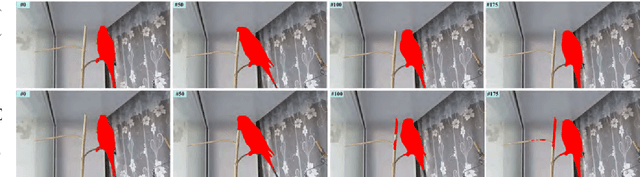

Most recent semi-supervised video object segmentation (VOS) methods rely on fine-tuning deep convolutional neural networks online using the given mask of the first frame or predicted masks of subsequent frames. However, the online fine-tuning process is usually time-consuming, limiting the practical use of such methods. We propose a directional deep embedding and appearance learning (DDEAL) method, which is free of the online fine-tuning process, for fast VOS. First, a global directional matching module, which can be efficiently implemented by parallel convolutional operations, is proposed to learn a semantic pixel-wise embedding as an internal guidance. Second, an effective directional appearance model based statistics is proposed to represent the target and background on a spherical embedding space for VOS. Equipped with the global directional matching module and the directional appearance model learning module, DDEAL learns static cues from the labeled first frame and dynamically updates cues of the subsequent frames for object segmentation. Our method exhibits state-of-the-art VOS performance without using online fine-tuning. Specifically, it achieves a J & F mean score of 74.8% on DAVIS 2017 dataset and an overall score G of 71.3% on the large-scale YouTube-VOS dataset, while retaining a speed of 25 fps with a single NVIDIA TITAN Xp GPU. Furthermore, our faster version runs 31 fps with only a little accuracy loss. Our code and trained networks are available at https://github.com/YingjieYin/Directional-Deep-Embedding-and-Appearance-Learning-for-Fast-Video-Object-Segmentation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge