Diminishing Empirical Risk Minimization for Unsupervised Anomaly Detection

Paper and Code

May 29, 2022

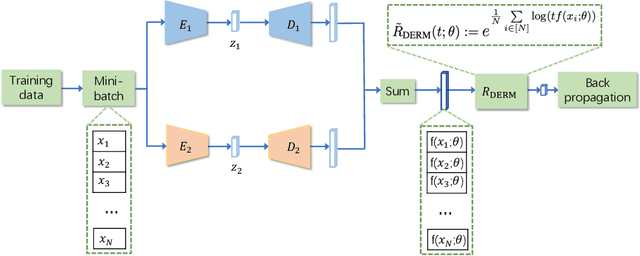

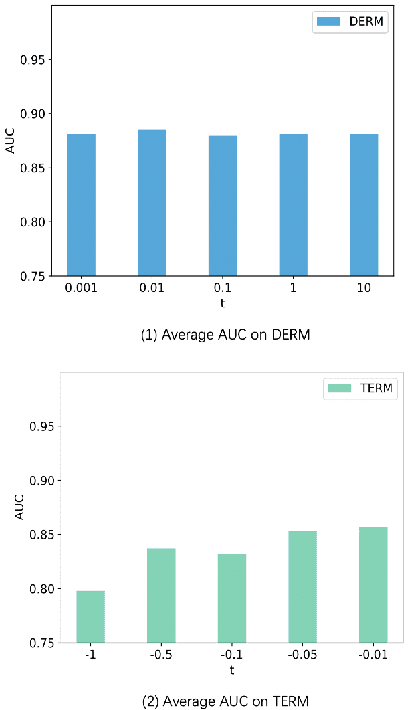

Unsupervised anomaly detection (AD) is a challenging task in realistic applications. Recently, there is an increasing trend to detect anomalies with deep neural networks (DNN). However, most popular deep AD detectors cannot protect the network from learning contaminated information brought by anomalous data, resulting in unsatisfactory detection performance and overfitting issues. In this work, we identify one reason that hinders most existing DNN-based anomaly detection methods from performing is the wide adoption of the Empirical Risk Minimization (ERM). ERM assumes that the performance of an algorithm on an unknown distribution can be approximated by averaging losses on the known training set. This averaging scheme thus ignores the distinctions between normal and anomalous instances. To break through the limitations of ERM, we propose a novel Diminishing Empirical Risk Minimization (DERM) framework. Specifically, DERM adaptively adjusts the impact of individual losses through a well-devised aggregation strategy. Theoretically, our proposed DERM can directly modify the gradient contribution of each individual loss in the optimization process to suppress the influence of outliers, leading to a robust anomaly detector. Empirically, DERM outperformed the state-of-the-art on the unsupervised AD benchmark consisting of 18 datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge