Developing Constrained Neural Units Over Time

Paper and Code

Sep 01, 2020

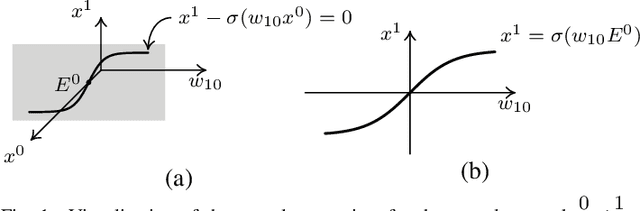

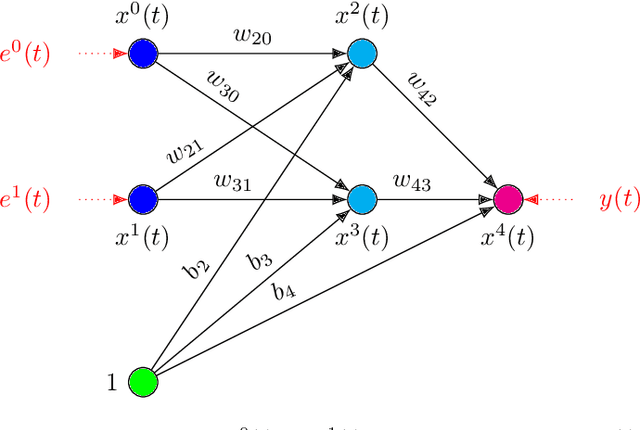

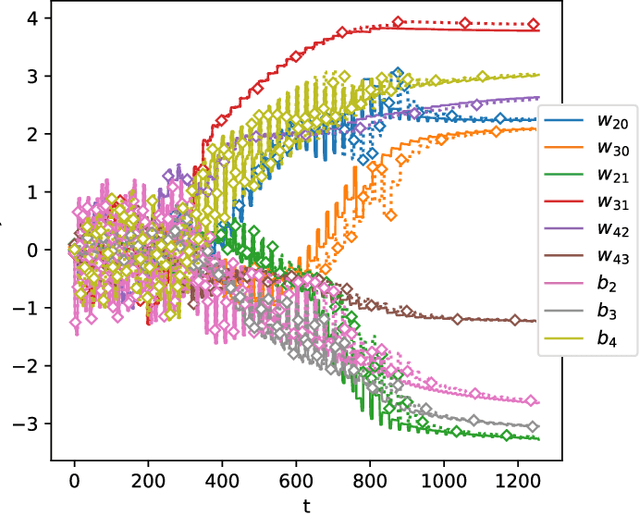

In this paper we present a foundational study on a constrained method that defines learning problems with Neural Networks in the context of the principle of least cognitive action, which very much resembles the principle of least action in mechanics. Starting from a general approach to enforce constraints into the dynamical laws of learning, this work focuses on an alternative way of defining Neural Networks, that is different from the majority of existing approaches. In particular, the structure of the neural architecture is defined by means of a special class of constraints that are extended also to the interaction with data, leading to "architectural" and "input-related" constraints, respectively. The proposed theory is cast into the time domain, in which data are presented to the network in an ordered manner, that makes this study an important step toward alternative ways of processing continuous streams of data with Neural Networks. The connection with the classic Backpropagation-based update rule of the weights of networks is discussed, showing that there are conditions under which our approach degenerates to Backpropagation. Moreover, the theory is experimentally evaluated on a simple problem that allows us to deeply study several aspects of the theory itself and to show the soundness of the model.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge