Deterministic error bounds for kernel-based learning techniques under bounded noise


Aug 10, 2020

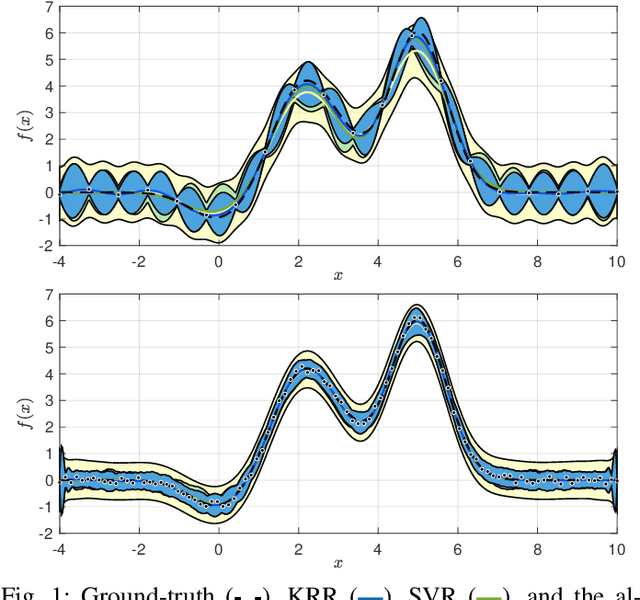

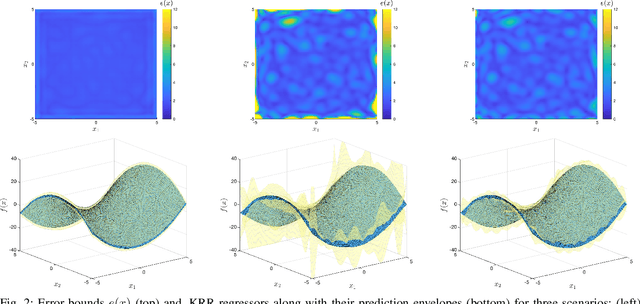

We consider the problem of reconstructing a function from a finite set of noise-corrupted samples. Two kernel algorithms are analyzed, namely kernel ridge regression and $\varepsilon$-support vector regression. Assuming that the ground-truth function belongs to the reproducing kernel Hilbert space of the chosen kernel and that the measurement noise affecting the dataset is bounded, we adopt an approximation theory viewpoint to establish *deterministic* error bounds for the two models. Finally, we discuss the connection of these bounds with Gaussian processes and provide two numerical examples. In establishing our inequalities, we hope to help bring the fields of non-parametric kernel learning and robust control closer to each other.
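To make the setting concrete, the following is a minimal sketch of kernel ridge regression on samples corrupted by bounded noise. It is not the paper's code and does not compute the paper's bounds; it only measures the empirical worst-case error on a test grid. The Gaussian kernel, the lengthscale, the regularization weight `lam`, the noise bound `delta_bar`, and the ground-truth function (a finite combination of kernel sections, so that it lies in the RKHS) are all assumptions chosen for illustration.

```python
import numpy as np

def gaussian_kernel(A, B, lengthscale=0.5):
    """Squared-exponential kernel between rows of A (n, d) and B (m, d)."""
    sq = (np.sum(A**2, axis=1)[:, None]
          + np.sum(B**2, axis=1)[None, :]
          - 2.0 * A @ B.T)
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * lengthscale**2))

def krr_fit(X, y, lam=1e-2, lengthscale=0.5):
    """Solve (K + lam * I) alpha = y for the KRR coefficients."""
    K = gaussian_kernel(X, X, lengthscale)
    return np.linalg.solve(K + lam * np.eye(len(X)), y)

def krr_predict(X_train, alpha, X_new, lengthscale=0.5):
    """Evaluate the KRR model sum_i alpha_i k(x, x_i) at the rows of X_new."""
    return gaussian_kernel(X_new, X_train, lengthscale) @ alpha

# Hypothetical ground truth: a finite sum of kernel sections, hence in the RKHS.
centers = np.array([[-1.0], [0.3], [1.2]])
coeffs = np.array([1.0, -0.5, 0.8])

def f_true(X):
    return gaussian_kernel(X, centers) @ coeffs

rng = np.random.default_rng(0)
delta_bar = 0.05                                   # assumed noise bound
X = rng.uniform(-2.0, 2.0, size=(40, 1))           # sample locations
noise = rng.uniform(-delta_bar, delta_bar, size=40)  # bounded, not Gaussian
y = f_true(X) + noise

alpha = krr_fit(X, y)
X_test = np.linspace(-2.0, 2.0, 200)[:, None]
max_err = np.max(np.abs(krr_predict(X, alpha, X_test) - f_true(X_test)))
print(f"empirical worst-case error on the grid: {max_err:.4f}")
```

The noise here is drawn uniformly from `[-delta_bar, delta_bar]` only to produce a bounded disturbance; the deterministic viewpoint described in the abstract requires boundedness, not any particular distribution.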

* 9 pages, 2 figures
