Defending Against Backdoors in Federated Learning with Robust Learning Rate


Jul 07, 2020

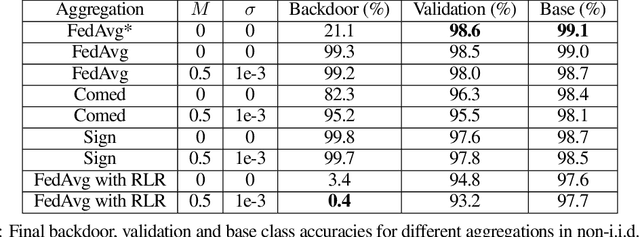

Federated Learning (FL) allows a set of agents to collaboratively train a model in a decentralized fashion without sharing their potentially sensitive data. This makes FL suitable for privacy-preserving applications. At the same time, FL is susceptible to adversarial attacks because the data is decentralized and unvetted. One important class of attacks against FL is backdoor attacks. In a backdoor attack, an adversary tries to embed backdoor functionality in the model during training, which can later be activated by a trigger to cause a desired misclassification. To prevent such backdoor attacks, we propose a lightweight defense that requires no change to the FL structure. At a high level, our defense is based on carefully adjusting the server's learning rate, per dimension, at each round based on the sign information of the agents' updates. We first conjecture the steps necessary to carry out a successful backdoor attack in the FL setting, and then explicitly formulate the defense based on our conjecture. Through experiments, we provide empirical evidence in support of our conjecture. We test our defense against backdoor attacks under different settings and observe that the backdoor is either completely eliminated or its accuracy is significantly reduced. Overall, our experiments suggest that our approach significantly outperforms some of the recently proposed defenses in the literature. We achieve this with minimal influence on the accuracy of the trained models.
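To make the idea of a per-dimension, sign-based server learning rate concrete, the following is a minimal sketch of one way such a rule could look. The abstract does not spell out the rule, so everything specific here is an assumption for illustration: the function name `robust_lr_aggregate`, the agreement `threshold`, the choice to negate the learning rate on dimensions where the agents' signs disagree, and the use of a plain average of the updates.

```python
def sign(x):
    """Return 1, -1, or 0 according to the sign of x."""
    return (x > 0) - (x < 0)


def robust_lr_aggregate(updates, server_lr=1.0, threshold=2):
    """Aggregate agent updates with a per-dimension learning rate.

    updates   : list of per-agent update vectors (equal-length lists of floats)
    server_lr : base server learning rate (hypothetical parameter)
    threshold : minimum |sum of signs| needed to trust a dimension
                (hypothetical parameter, not taken from the abstract)
    """
    n_agents = len(updates)
    dim = len(updates[0])
    aggregated = []
    for d in range(dim):
        column = [u[d] for u in updates]
        # How strongly the agents agree on the direction of this coordinate.
        agreement = abs(sum(sign(v) for v in column))
        # Assumed rule: keep the learning rate where agreement is high,
        # flip its sign where agreement is low.
        lr = server_lr if agreement >= threshold else -server_lr
        mean_update = sum(column) / n_agents
        aggregated.append(lr * mean_update)
    return aggregated


# Three agents, two dimensions. All agree on the sign of the first
# coordinate; one agent disagrees on the second.
updates = [[0.5, 0.5], [0.25, -0.5], [0.75, 0.75]]
print(robust_lr_aggregate(updates, server_lr=1.0, threshold=3))
# → [0.5, -0.25]
```

In this toy run the first coordinate is averaged as usual, while the second is applied with a negated learning rate because the agents do not all agree on its direction. How the threshold should be set relative to the number of agents is left open here.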
