Deep-PowerX: A Deep Learning-Based Framework for Low-Power Approximate Logic Synthesis

Jul 03, 2020

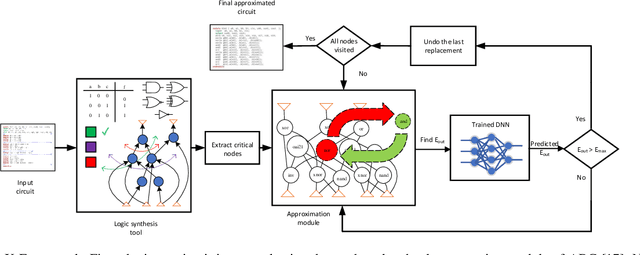

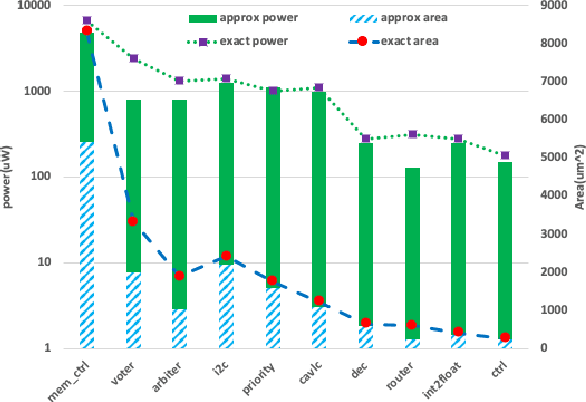

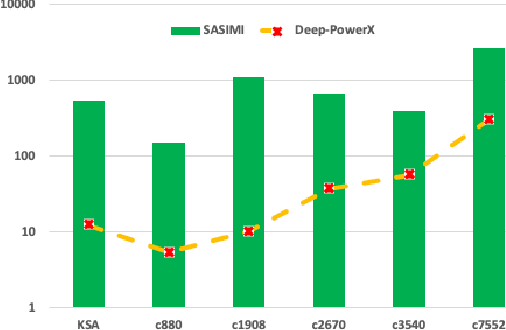

This paper integrates three powerful techniques, namely deep learning, approximate computing, and low-power design, into a strategy for optimizing logic at the synthesis level. We use advances in deep learning to guide an approximate logic synthesis engine that minimizes the dynamic power consumption of a given digital CMOS circuit, subject to a predetermined error rate at the primary outputs. Our framework, Deep-PowerX, replaces or removes gates in a technology-mapped network and uses a Deep Neural Network (DNN) to predict the error rate at the primary outputs of the circuit when a specific part of the netlist is approximated. The primary goal of Deep-PowerX is to reduce dynamic power; area reduction is a secondary objective. Using this DNN, Deep-PowerX reduces the exponential time complexity of standard approximate logic synthesis to linear time. Experiments are carried out on numerous open-source benchmark circuits. Results show reductions in power and area of up to 1.47 times and 1.43 times, respectively, compared to exact solutions, and of up to 22% and 27%, respectively, compared to state-of-the-art approximate logic synthesis tools, with run-time that is orders of magnitude lower.
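As a rough illustration of the idea of predictor-guided approximation, the sketch below greedily accepts gate approximations as long as a predicted output error rate stays within a budget. It is a toy under stated assumptions, not the Deep-PowerX algorithm: the `Candidate` type, the `greedy_approximate` function, the gate names, the power numbers, and the additive `toy_predictor` (a stand-in for the DNN) are all hypothetical. The point it shows is that the error predictor is queried once per candidate, so the number of queries grows linearly with the number of candidates.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet, List


@dataclass(frozen=True)
class Candidate:
    gate: str            # hypothetical gate name in the mapped netlist
    power_saving: float  # assumed dynamic power saved if approximated (arbitrary units)


def greedy_approximate(
    candidates: List[Candidate],
    predict_error: Callable[[FrozenSet[str]], float],
    max_error_rate: float,
) -> List[Candidate]:
    """Accept candidates in order of power saving while the predicted
    error rate at the primary outputs stays within the budget.
    The predictor is called exactly once per candidate."""
    chosen: List[Candidate] = []
    for cand in sorted(candidates, key=lambda c: c.power_saving, reverse=True):
        trial = frozenset(c.gate for c in chosen) | {cand.gate}
        if predict_error(trial) <= max_error_rate:
            chosen.append(cand)
    return chosen


# Toy stand-in for the DNN: sums made-up per-gate error contributions.
TOY_ERROR = {"g1": 0.03, "g2": 0.01, "g3": 0.04}


def toy_predictor(gates: FrozenSet[str]) -> float:
    return sum(TOY_ERROR[g] for g in gates)


cands = [Candidate("g1", 5.0), Candidate("g2", 2.0), Candidate("g3", 4.0)]
result = greedy_approximate(cands, toy_predictor, max_error_rate=0.05)
print([c.gate for c in result])  # gates accepted under the 5% error budget
```

In this toy run, `g3` is rejected because adding it to `g1` would push the predicted error rate above the budget, while the smaller `g2` still fits. A real flow would replace `toy_predictor` with a trained model and the power figures with estimates from the technology library.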
