Deep One-Class Classification Using Intra-Class Splitting

Paper and Code

Mar 12, 2019

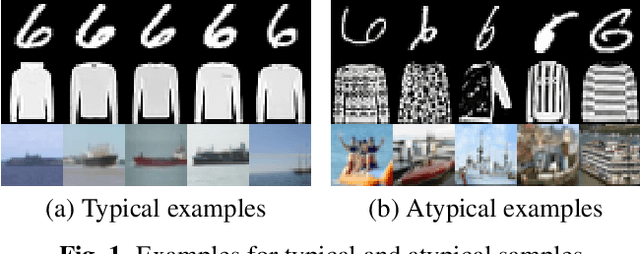

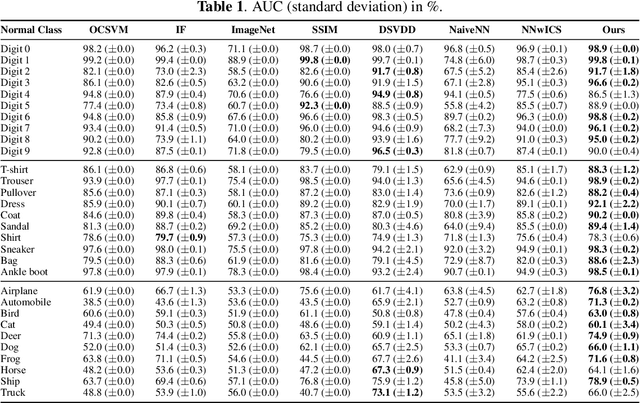

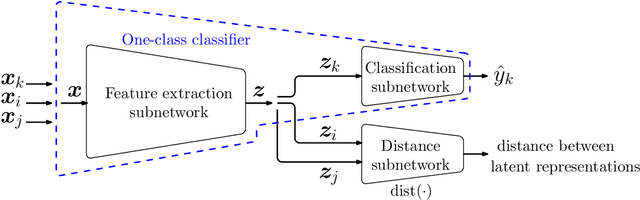

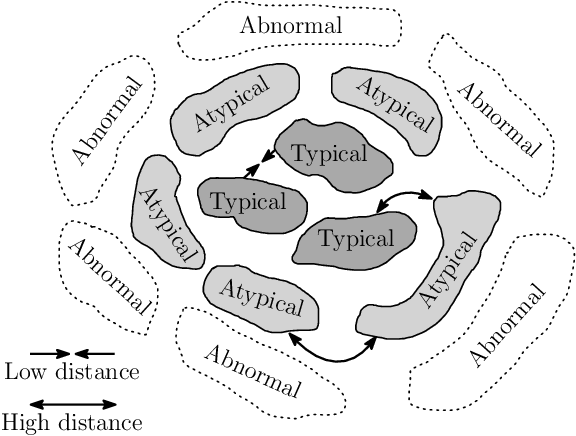

This paper introduces a generic method that enables conventional deep neural networks to be used as end-to-end one-class classifiers. The method is based on splitting the given data from one class into two subsets. In one-class classification, only samples of one normal class are available for training. At inference time, the goal is a closed and tight decision boundary around the training samples, which conventional binary or multi-class neural networks cannot provide. By splitting the data into typical and atypical normal subsets, the proposed method can use a binary loss, and it defines an auxiliary subnetwork that imposes distance constraints in the latent space. Experiments on three well-known image datasets show the effectiveness of the proposed method, which outperforms seven baselines and performs better than or comparably to the state of the art.
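The splitting step can be illustrated with a minimal sketch. The abstract does not say how typical and atypical samples are told apart, so this example assumes a per-sample score where higher means less typical (for instance a reconstruction error from some pretrained model). The function name `intra_class_split`, the `atypical_ratio` parameter, and the score values are hypothetical and chosen only for illustration.

```python
import numpy as np

def intra_class_split(scores, atypical_ratio=0.1):
    """Split one-class samples into typical and atypical index sets.

    Assumption (not from the abstract): `scores` holds one value per
    sample, and a higher value means the sample is less typical.
    """
    scores = np.asarray(scores, dtype=float)
    n_atypical = int(round(atypical_ratio * scores.size))
    # Sort ascending so the least typical samples end up last.
    order = np.argsort(scores, kind="stable")
    typical_idx = order[: scores.size - n_atypical]
    atypical_idx = order[scores.size - n_atypical:]
    return typical_idx, atypical_idx

# Hypothetical scores for ten samples that all belong to the normal class.
scores = np.array([0.10, 0.05, 0.90, 0.20, 0.15, 0.70, 0.12, 0.08, 0.30, 0.11])
typical_idx, atypical_idx = intra_class_split(scores, atypical_ratio=0.2)

# Pseudo-labels that make a binary loss usable: 0 = typical, 1 = atypical.
labels = np.zeros(scores.size, dtype=int)
labels[atypical_idx] = 1
```

With pseudo-labels of this kind, an ordinary binary classifier can be trained even though every sample comes from the same class. The auxiliary subnetwork and the latent-space distance constraints mentioned in the abstract are not covered by this sketch.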
