Deep Active Learning with Noisy Oracle in Object Detection


Sep 30, 2023

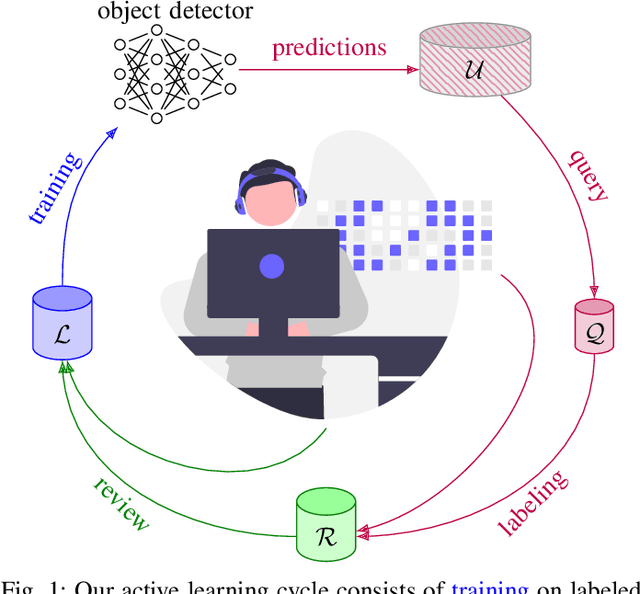

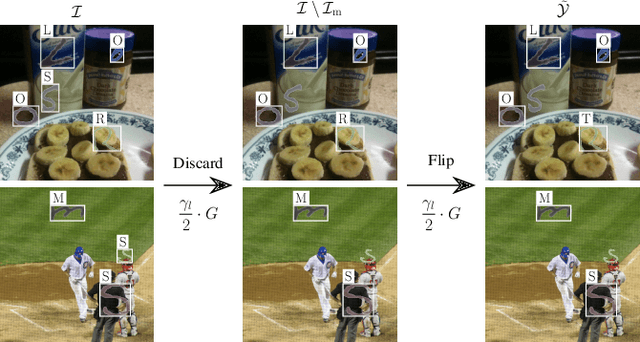

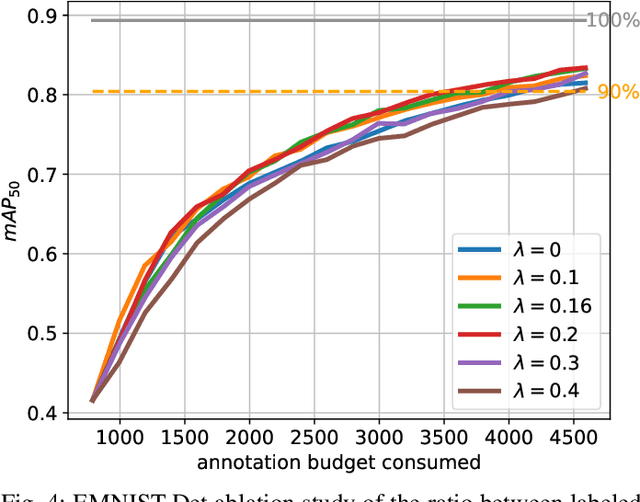

Obtaining annotations for complex computer vision tasks such as object detection is an expensive and time-intensive endeavor involving a large number of human workers or expert opinions. Reducing the number of annotations required while maintaining algorithm performance is therefore desirable for machine learning practitioners and has been successfully achieved by active learning algorithms. However, model performance depends not only on the number of annotations but also on their quality. In practice, the annotations returned by the queried oracles frequently contain significant amounts of noise, so cleansing procedures are often necessary to review and correct the given labels. This process is subject to the same budget as the initial annotation itself, since it requires human workers or even domain experts. Here, we propose a composite active learning framework for deep object detection that includes a label review module. We show that using part of the annotation budget to partially correct the noisy annotations in the active dataset leads to early improvements in model performance, especially when coupled with uncertainty-based query strategies. The precision of the label error proposals has a significant influence on the measured effect of the label review. In our experiments, we achieve improvements of up to 4.5 mAP points in object detection performance by incorporating label reviews at equal annotation budget.
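To make the budget trade-off concrete, the following is a minimal sketch of one active learning cycle in which a fixed budget is split between reviewing existing labels and querying new ones. It is not the authors' implementation. The function name `plan_cycle`, the `review_fraction` parameter, the per-item scores, and the assumption that a review costs the same as a new annotation are all hypothetical choices made for illustration.

```python
def plan_cycle(uncertainty, error_score, labeled, unlabeled, budget, review_fraction):
    """Split one cycle's budget between label review and new queries.

    Assumptions (hypothetical, not taken from the paper):
    - reviewing one label costs the same as annotating one new item,
    - `error_score[i]` ranks labeled item i by how likely its label is wrong,
    - `uncertainty[i]` ranks unlabeled item i by model uncertainty.
    """
    # Part of the budget goes to review, capped by the number of labeled items.
    review_budget = min(int(budget * review_fraction), len(labeled))
    # The remainder goes to querying new annotations from the noisy oracle.
    query_budget = budget - review_budget

    # Review the labeled items with the highest label-error scores.
    to_review = sorted(labeled, key=lambda i: error_score[i], reverse=True)[:review_budget]
    # Query the unlabeled items with the highest uncertainty.
    to_query = sorted(unlabeled, key=lambda i: uncertainty[i], reverse=True)[:query_budget]
    return to_review, to_query


# Toy example: three labeled items, three unlabeled items, budget of four.
error_score = {0: 0.2, 1: 0.8, 2: 0.5}
uncertainty = {3: 0.9, 4: 0.1, 5: 0.5}
to_review, to_query = plan_cycle(
    uncertainty, error_score, labeled=[0, 1, 2], unlabeled=[3, 4, 5],
    budget=4, review_fraction=0.5,
)
print(to_review, to_query)
```

Setting `review_fraction` to zero recovers a plain uncertainty-based query cycle, which is one way to compare the two settings at equal budget.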
