DA-DRN: Degradation-Aware Deep Retinex Network for Low-Light Image Enhancement

Oct 05, 2021

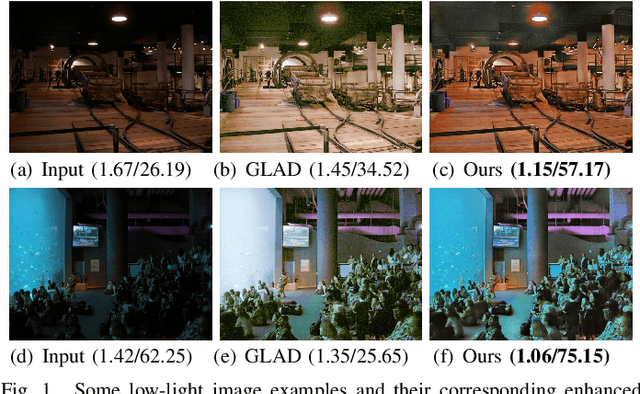

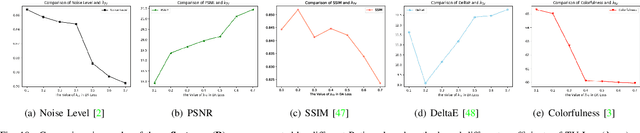

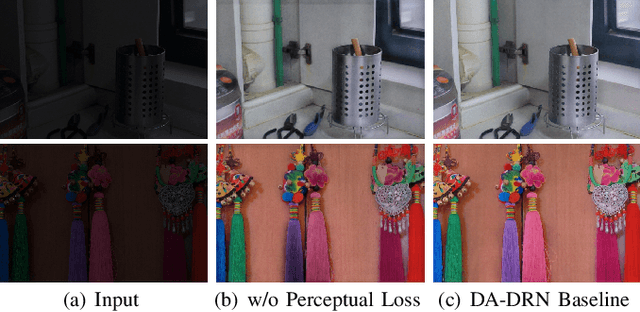

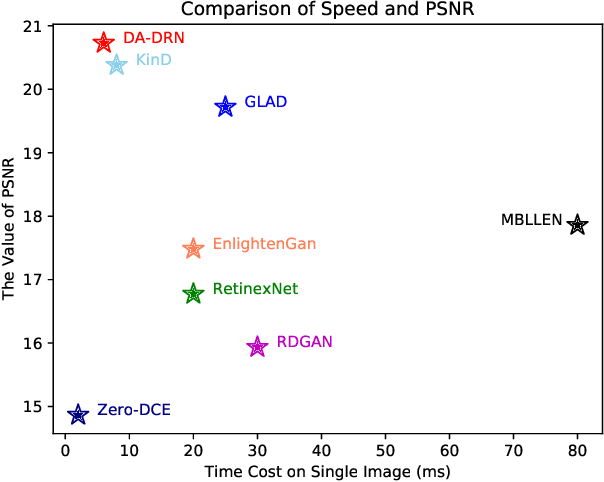

Images captured in real-world low-light conditions are not only low in brightness; they also suffer from many other types of degradation, such as color distortion, unknown noise, detail loss and halo artifacts. In this paper, we propose a Degradation-Aware Deep Retinex Network (denoted as DA-DRN) for low-light image enhancement that tackles these degradations. Based on Retinex theory, the decomposition net in our model decomposes low-light images into reflectance and illumination maps and deals with the degradation in the reflectance directly during the decomposition phase. We propose a Degradation-Aware Module (DA Module) that guides the training of the decomposer and enables it to act as a restorer, without additional computational cost in the test phase. The DA Module removes noise while preserving detail information in the illumination map, and also tackles color distortion and halo artifacts. We introduce a perceptual loss to train the enhancement network so that it generates brightness-improved illumination maps that are more consistent with human visual perception. We train and evaluate our model on the LOL real-world and LOL synthetic datasets, and we also test it on several other frequently used datasets without ground truth (LIME, DICM, MEF and NPE). Extensive experiments demonstrate that our approach achieves promising results with good robustness and generalization, and that it outperforms many other state-of-the-art methods both qualitatively and quantitatively. Our method takes only 7 ms to process a 600x400 image on a TITAN Xp GPU.
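
To make the Retinex model behind the abstract concrete, the following is a minimal sketch of the decomposition it refers to: an image is treated as the element-wise product of a reflectance map and an illumination map. This is not the DA-DRN decomposition net. The illumination here is a hand-crafted estimate (the per-pixel maximum over color channels), the brightening step is a plain gamma curve standing in for the learned enhancement network, and the names `naive_retinex_decompose`, `recompose` and `brighten` are hypothetical helpers introduced only for illustration.

```python
import numpy as np


def naive_retinex_decompose(image, eps=1e-4):
    """Split an RGB image with values in [0, 1] into reflectance and illumination.

    Assumption: illumination is approximated by the per-pixel maximum over
    the color channels. This is a hand-crafted stand-in, not the learned
    decomposition net described in the abstract.
    """
    illumination = np.max(image, axis=2, keepdims=True)  # shape (H, W, 1)
    illumination = np.maximum(illumination, eps)         # avoid division by zero
    reflectance = image / illumination                   # shape (H, W, 3)
    return reflectance, illumination


def recompose(reflectance, illumination):
    """Retinex model: image = reflectance * illumination (element-wise)."""
    return reflectance * illumination


def brighten(illumination, gamma=0.45):
    """Gamma curve as a placeholder for the learned enhancement network."""
    return np.power(illumination, gamma)


# Synthetic dark image: all pixel values lie in [0, 0.2).
rng = np.random.default_rng(0)
low_light = rng.uniform(0.0, 0.2, size=(4, 6, 3))

reflectance, illumination = naive_retinex_decompose(low_light)
enhanced = recompose(reflectance, brighten(illumination))
```

Recomposing the unmodified maps reproduces the input, while brightening only the illumination map raises overall brightness and leaves the reflectance untouched. In the approach described above, noise and color distortion are handled in the reflectance during decomposition, which a fixed rule like this one cannot do.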
