CRLF: Automatic Calibration and Refinement based on Line Feature for LiDAR and Camera in Road Scenes


Mar 08, 2021

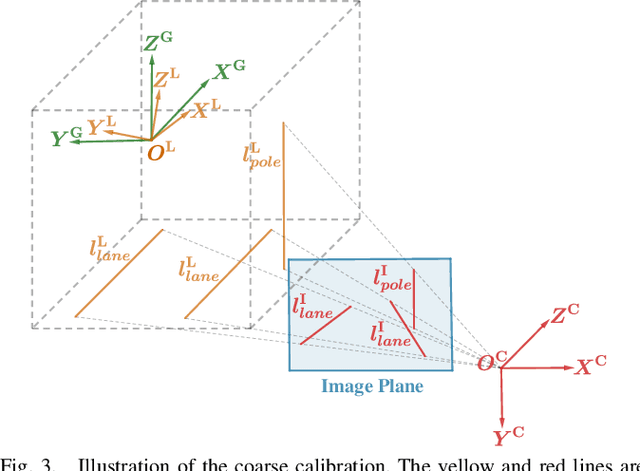

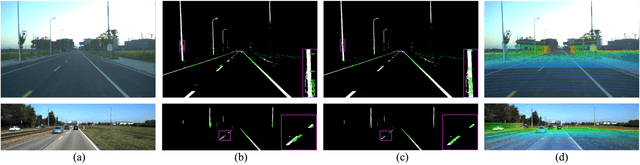

For autonomous vehicles, accurate calibration of LiDAR and camera is a prerequisite for multi-sensor perception systems. However, existing calibration techniques require either a complicated setup with various calibration targets or an initial calibration provided beforehand, which greatly impedes their applicability in large-scale autonomous vehicle deployment. To tackle these issues, we propose a novel method to calibrate the extrinsic parameters of LiDAR and camera in road scenes. Our method extracts line features from static straight-line-shaped objects, such as road lanes and poles, in both the image and the point cloud, and formulates the initial calibration of the extrinsic parameters as a perspective-3-lines (P3L) problem. Subsequently, a cost function defined under the semantic constraints of the line features is used to refine the coarse calibration obtained from the P3L solution. The whole procedure is fully automatic and user-friendly, with no need to adjust environment settings or provide an initial calibration. We conduct extensive experiments on KITTI and our in-house dataset; quantitative and qualitative results demonstrate the robustness and accuracy of our method.
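
To make the refinement idea concrete, the following is a minimal sketch of how a candidate extrinsic calibration could be scored against one line feature. It is not the cost function from the paper, whose semantic constraints are not specified in the abstract. It assumes a pinhole camera model and uses a plain point-to-line pixel distance as a stand-in. The names `project_points` and `line_reprojection_cost`, the intrinsic matrix `K`, and the sample pole points are all hypothetical and chosen only for illustration.

```python
import numpy as np


def project_points(points_lidar, R, t, K):
    """Project Nx3 LiDAR points to pixel coordinates (pinhole model, assumed)."""
    points_cam = points_lidar @ R.T + t   # LiDAR frame -> camera frame
    uvw = points_cam @ K.T                # camera frame -> homogeneous pixels
    return uvw[:, :2] / uvw[:, 2:3]       # perspective divide


def line_reprojection_cost(points_lidar, line_abc, R, t, K):
    """Mean pixel distance from projected LiDAR line points to an image line.

    line_abc = (a, b, c) describes the image line a*u + b*v + c = 0.
    This is an illustrative stand-in, not the paper's semantic cost.
    """
    a, b, c = line_abc
    pixels = project_points(points_lidar, R, t, K)
    dist = np.abs(a * pixels[:, 0] + b * pixels[:, 1] + c) / np.hypot(a, b)
    return float(dist.mean())


# Hypothetical intrinsics and a vertical pole 10 m in front of the camera.
K = np.array([[500.0, 0.0, 320.0],
              [0.0, 500.0, 240.0],
              [0.0, 0.0, 1.0]])
pole = np.array([[1.0, -1.0, 10.0],
                 [1.0, 0.0, 10.0],
                 [1.0, 1.0, 10.0]])
image_line = (1.0, 0.0, -370.0)  # the pole seen in the image: u = 370

R_true, t_true = np.eye(3), np.zeros(3)
t_offset = np.array([0.2, 0.0, 0.0])  # extrinsics off by 20 cm along x

cost_true = line_reprojection_cost(pole, image_line, R_true, t_true, K)
cost_offset = line_reprojection_cost(pole, image_line, R_true, t_offset, K)
print(cost_true, cost_offset)
```

In this toy setup the correct extrinsics give a cost near zero, while the translated extrinsics shift the projected pole away from the image line and raise the cost. A refinement step could then search over small perturbations of the rotation and translation for the lowest total cost across all matched lines.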
