Context vs Target Word: Quantifying Biases in Lexical Semantic Datasets


Dec 13, 2021

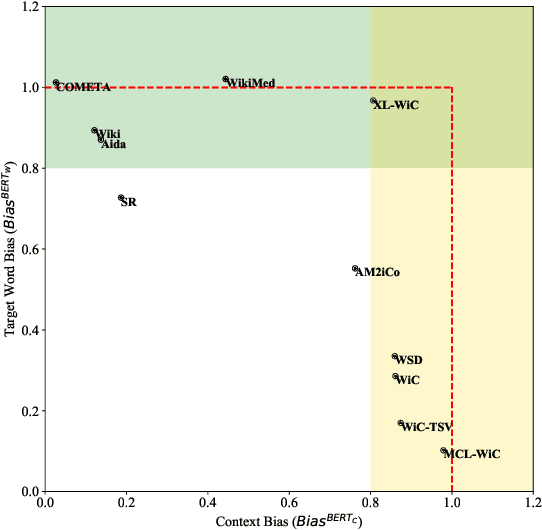

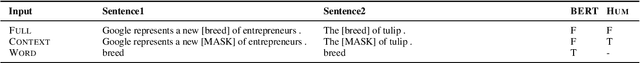

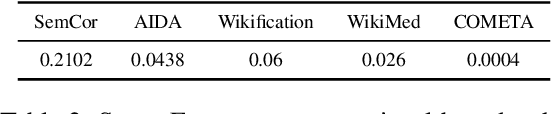

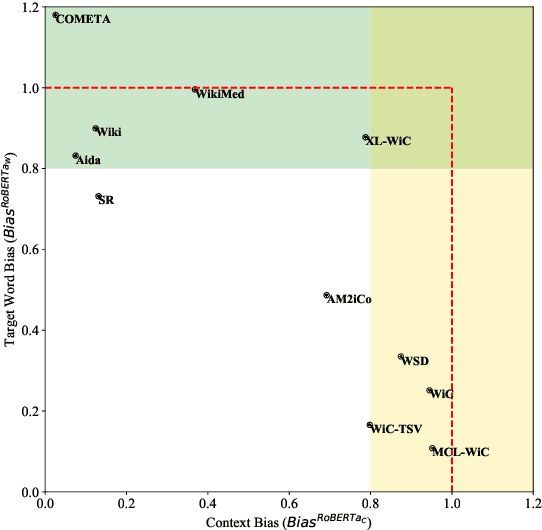

State-of-the-art contextualized models such as BERT are commonly evaluated on tasks like WiC and WSD to assess their word-in-context representations. This practice implicitly assumes that performance on these tasks reflects how well a model represents the coupled semantics of a word and its context. This study tests that assumption by presenting the first quantitative analysis (using probing baselines) of the context-word interaction tested in major contextual lexical semantic tasks. Specifically, based on probing baseline performance, we propose measures of the degree of context or target-word bias in a dataset and plot existing datasets on a continuum. The analysis shows that most existing datasets fall at the extreme ends of the continuum (i.e. they are either heavily context-biased or heavily target-word-biased), while only AM²iCo and Sense Retrieval challenge a model to represent both the context and the target word. Our case study on WiC reveals that human subjects do not share models' strong context biases on the dataset (humans found semantic judgments much more difficult when the target word was missing) and that models are learning spurious correlations from the context alone. This study demonstrates that these tasks usually do not test models' word-in-context representations as such, and their results are therefore open to misinterpretation. We recommend our framework as a sanity check for context and target-word biases in future task design and application in lexical semantics.
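The abstract does not state the formula behind the bias measures. As a rough illustration only, the sketch below assumes one plausible normalization: each partial-input probe (context only, with the target word masked; or target word only, with the context removed) is scored by the fraction of the full-input model's gain over chance that it recovers. The names `ProbeScores`, `normalized_gain`, and `bias_profile`, and the scores in the usage line, are hypothetical; they are not the paper's definitions or reported results.

```python
from dataclasses import dataclass


@dataclass
class ProbeScores:
    """Accuracies on one dataset (hypothetical inputs, not reported results)."""
    full: float          # model sees both the context and the target word
    context_only: float  # target word masked out, context kept
    word_only: float     # context removed, target word alone
    chance: float        # random or majority-class baseline


def normalized_gain(baseline: float, full: float, chance: float) -> float:
    """Fraction of the full model's gain over chance recovered by a
    partial-input baseline, clipped to [0, 1].

    Assumed normalization for illustration, not the paper's formula.
    """
    headroom = full - chance
    if headroom <= 0:
        raise ValueError("full-input accuracy must exceed chance")
    ratio = (baseline - chance) / headroom
    return min(max(ratio, 0.0), 1.0)


def bias_profile(s: ProbeScores) -> dict:
    """Hypothetical context/word bias scores and a signed continuum position
    (positive leans context-biased, negative leans target-word-biased)."""
    ctx = normalized_gain(s.context_only, s.full, s.chance)
    word = normalized_gain(s.word_only, s.full, s.chance)
    return {"context_bias": ctx, "word_bias": word, "continuum": ctx - word}


if __name__ == "__main__":
    # Made-up numbers purely to show the call shape.
    scores = ProbeScores(full=0.70, context_only=0.65, word_only=0.55, chance=0.50)
    print({k: round(v, 2) for k, v in bias_profile(scores).items()})
```

Under this assumed measure, a `context_bias` close to 1 means the context alone nearly matches the full-input model, which is the kind of context-biased dataset the abstract describes; the clipping simply keeps probes that score below chance from producing negative bias values.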
