Constrained deep neural network architecture search for IoT devices accounting hardware calibration

Paper and Code

Sep 24, 2019

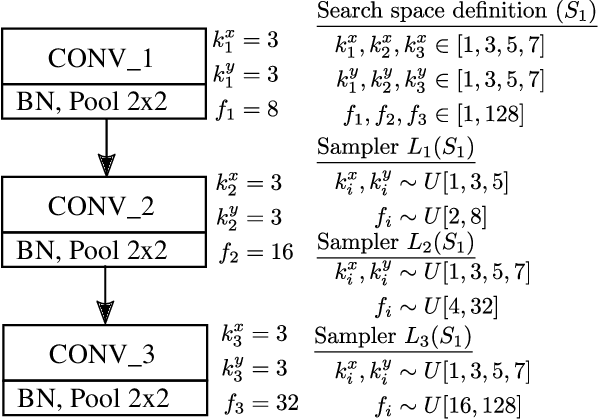

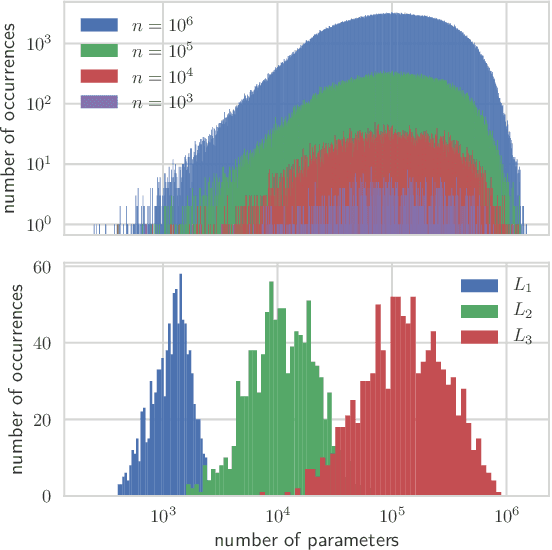

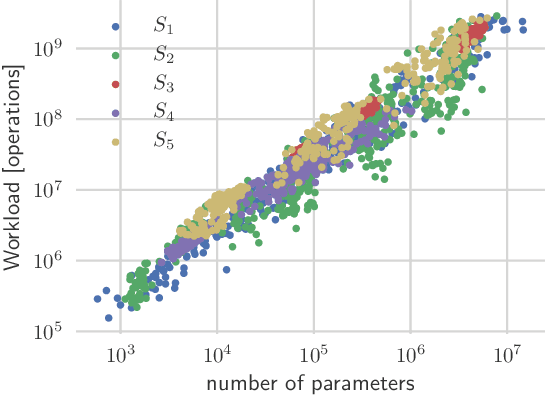

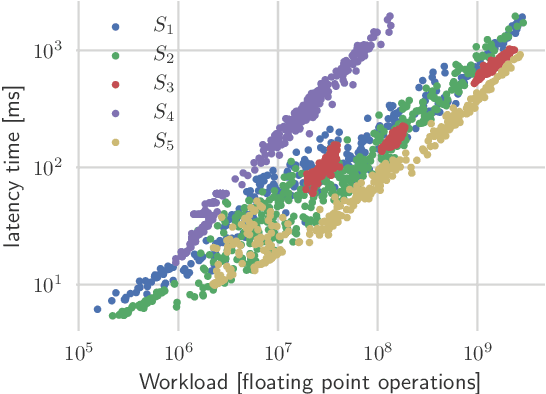

Deep neural networks achieve outstanding results in challenging image classification tasks. However, the design of network topologies is a complex task and the research community makes a constant effort in discovering top-accuracy topologies, either manually or employing expensive architecture searches. In this work, we propose a unique narrow-space architecture search that focuses on delivering low-cost and fast executing networks that respect strict memory and time requirements typical of Internet-of-Things (IoT) near-sensor computing platforms. Our approach provides solutions with classification latencies below 10ms running on a $35 device with 1GB RAM and 5.6GFLOPS peak performance. The narrow-space search of floating-point models improves the accuracy on CIFAR10 of an established IoT model from 70.64% to 74.87% respecting the same memory constraints. We further improve the accuracy to 82.07% by including 16-bit half types and we obtain the best accuracy of 83.45% by extending the search with model optimized IEEE 754 reduced types. To the best of our knowledge, we are the first that empirically demonstrate on over 3000 trained models that running with reduced precision pushes the Pareto optimal front by a wide margin. Under a given memory constraint, accuracy is improved by over 7% points for half and over 1% points further for running with the best model individual format.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge