Computer vision tasks for intelligent aerospace missions: An overview

Paper and Code

Jul 09, 2024

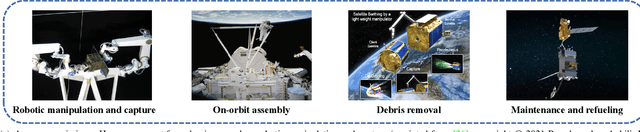

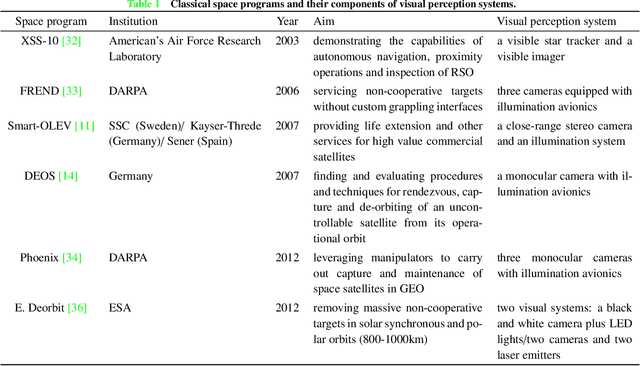

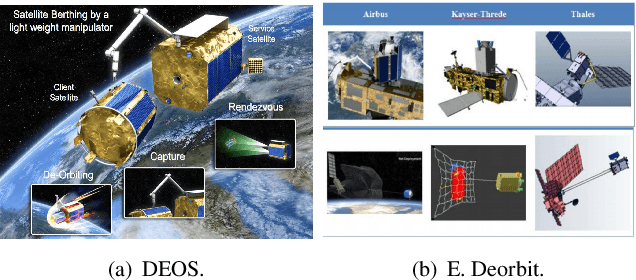

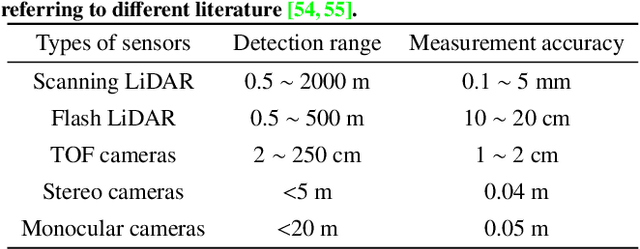

Computer vision tasks are crucial for aerospace missions as they help spacecraft to understand and interpret the space environment, such as estimating position and orientation, reconstructing 3D models, and recognizing objects, which have been extensively studied to successfully carry out the missions. However, traditional methods like Kalman Filtering, Structure from Motion, and Multi-View Stereo are not robust enough to handle harsh conditions, leading to unreliable results. In recent years, deep learning (DL)-based perception technologies have shown great potential and outperformed traditional methods, especially in terms of their robustness to changing environments. To further advance DL-based aerospace perception, various frameworks, datasets, and strategies have been proposed, indicating significant potential for future applications. In this survey, we aim to explore the promising techniques used in perception tasks and emphasize the importance of DL-based aerospace perception. We begin by providing an overview of aerospace perception, including classical space programs developed in recent years, commonly used sensors, and traditional perception methods. Subsequently, we delve into three fundamental perception tasks in aerospace missions: pose estimation, 3D reconstruction, and recognition, as they are basic and crucial for subsequent decision-making and control. Finally, we discuss the limitations and possibilities in current research and provide an outlook on future developments, including the challenges of working with limited datasets, the need for improved algorithms, and the potential benefits of multi-source information fusion.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge