Computationally efficient joint coordination of multiple electric vehicle charging points using reinforcement learning


Mar 26, 2022

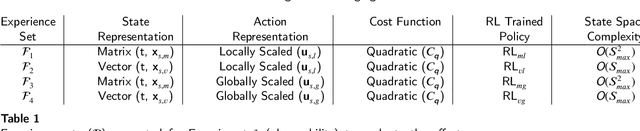

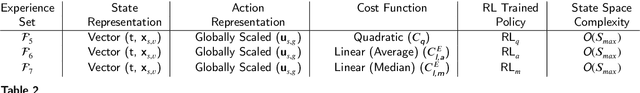

A major challenge in today's power grid is managing the increasing load from electric vehicle (EV) charging. Demand response (DR) solutions aim to exploit the flexibility therein, i.e., the ability to shift EV charging in time and thus avoid excessive peaks or achieve better balancing. Whereas most existing research either focuses on control strategies for a single EV charger or uses a multi-step approach (e.g., a first high-level aggregate control decision step, followed by individual EV control decisions), we propose a single-step solution that jointly coordinates multiple charging points at once. In this paper, we further refine an initial proposal using reinforcement learning (RL), specifically addressing computational challenges that would limit its deployment in practice. More precisely, we design a new Markov decision process (MDP) formulation of the EV charging coordination process that exhibits only linear space and time complexity (as opposed to the earlier quadratic space complexity). We thus improve upon the earlier state of the art, demonstrating a 30% reduction in training time in our case study using real-world EV charging session data. Yet we do not sacrifice performance in meeting the DR objectives: our new RL solutions still improve the performance of charging demand coordination by 40-50% compared to a business-as-usual policy (which charges each EV fully upon arrival) and by 20-30% compared to a heuristic policy (which spreads each EV's charging uniformly over time).
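The two baseline policies named in the abstract can be illustrated with a minimal Python sketch. This is not the paper's code and does not cover the RL method or its MDP formulation. The `Session` fields, the function names, the discrete timeslots, and the unit charging power per slot are all assumptions made here for illustration.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """Hypothetical EV session on a discrete timeslot grid (assumed model)."""
    arrival: int    # first slot in which the EV is connected
    departure: int  # first slot in which the EV is gone (exclusive)
    demand: int     # number of full-power slots needed to charge fully


def bau_load(sessions, horizon):
    """Business-as-usual: charge at full power from arrival until done."""
    load = [0.0] * horizon
    for s in sessions:
        # Stop when the demand is met, the EV leaves, or the horizon ends.
        end = min(s.arrival + s.demand, s.departure, horizon)
        for t in range(s.arrival, end):
            load[t] += 1.0  # assumed unit charging power per slot
    return load


def uniform_load(sessions, horizon):
    """Heuristic: spread each EV's demand evenly over its connection time."""
    load = [0.0] * horizon
    for s in sessions:
        end = min(s.departure, horizon)
        stay = end - s.arrival
        if stay <= 0:
            continue
        rate = min(1.0, s.demand / stay)  # never exceed full power
        for t in range(s.arrival, end):
            load[t] += rate
    return load


sessions = [Session(arrival=0, departure=4, demand=2),
            Session(arrival=1, departure=5, demand=2)]
print(bau_load(sessions, horizon=6))      # [1.0, 2.0, 1.0, 0.0, 0.0, 0.0]
print(uniform_load(sessions, horizon=6))  # [0.5, 1.0, 1.0, 1.0, 0.5, 0.0]
```

In this toy example both policies deliver the same total energy, but the uniform heuristic halves the peak load. A learned coordination policy would aim to exploit the same kind of flexibility across all charging points jointly.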
