Combining speakers of multiple languages to improve quality of neural voices

Aug 17, 2021

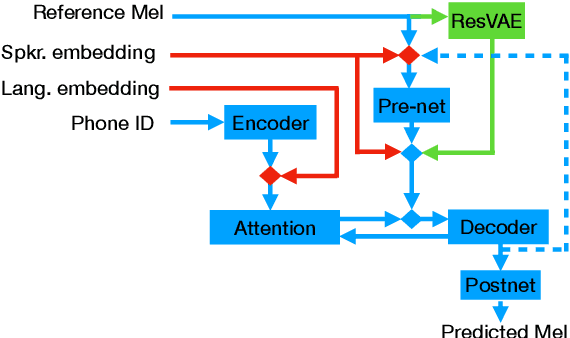

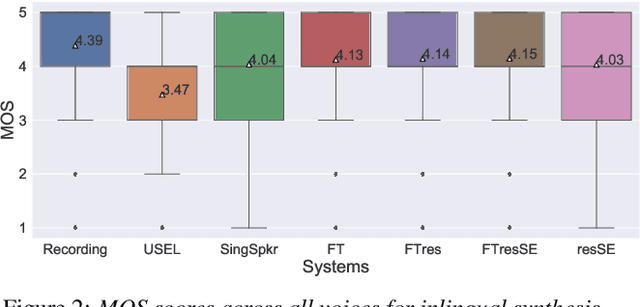

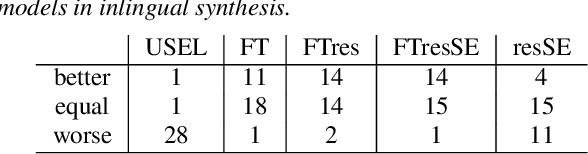

In this work, we explore multiple architectures and training procedures for developing a multi-speaker, multi-lingual neural TTS system with two goals: a) improving quality when the available data in the target language is limited, and b) enabling cross-lingual synthesis. We report results from a large experiment using 30 speakers in 8 different languages across 15 different locales. The system is trained on the same amount of data per speaker. When fine-tuned to a speaker, the proposed system produces significantly better quality than a single-speaker model in most cases, while using less than $40\%$ of the speaker data used to build the single-speaker model. In cross-lingual synthesis, the generated quality is, on average, within $80\%$ of native single-speaker models in terms of Mean Opinion Score.
