CAS4DL: Christoffel Adaptive Sampling for function approximation via Deep Learning


Aug 25, 2022

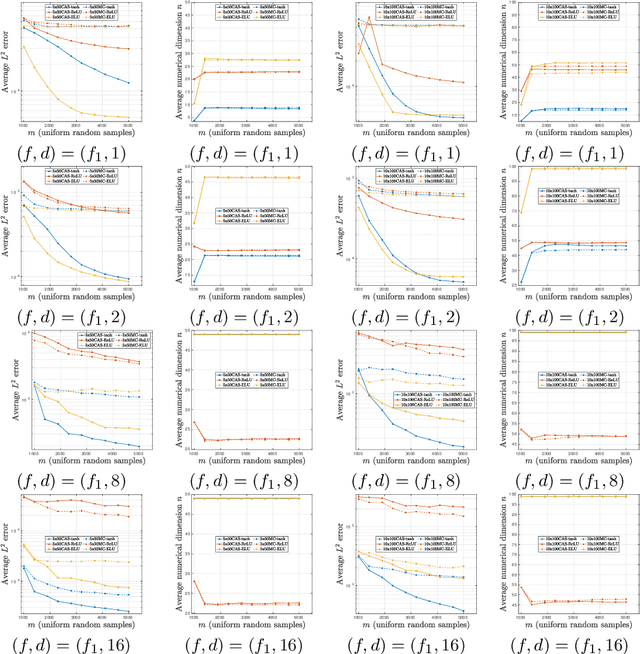

The problem of approximating smooth, multivariate functions from sample points arises in many applications in scientific computing, e.g., in computational Uncertainty Quantification (UQ) for science and engineering. In these applications, the target function may represent a desired quantity of interest of a parameterized Partial Differential Equation (PDE). Because each sample is computed by solving a PDE, samples are expensive, and sample efficiency is a key concern in these applications. Recently, there has been increasing focus on the use of Deep Neural Networks (DNN) and Deep Learning (DL) for learning such functions from data. In this work, we propose an adaptive sampling strategy, CAS4DL (Christoffel Adaptive Sampling for Deep Learning), to increase the sample efficiency of DL for multivariate function approximation. Our novel approach is based on interpreting the second-to-last layer of a DNN as a dictionary of functions defined by the nodes on that layer. With this viewpoint, we then define an adaptive sampling strategy motivated by adaptive sampling schemes recently proposed for linear approximation schemes, wherein samples are drawn randomly with respect to the Christoffel function of the subspace spanned by this dictionary. We present numerical experiments comparing CAS4DL with standard Monte Carlo (MC) sampling. Our results demonstrate that CAS4DL often yields substantial savings in the number of samples required to achieve a given accuracy, particularly in the case of smooth activation functions, and that it is more stable than MC. These results are therefore a promising step toward fully adapting DL to scientific computing applications.
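The core idea of sampling with respect to the Christoffel function of a dictionary can be illustrated with a minimal sketch. This is not the authors' implementation: the abstract does not give algorithmic details, so the sketch assumes a finite grid of candidate points, uses an SVD to orthonormalize the dictionary on that grid, and substitutes a random `tanh` layer for the second-to-last layer of a trained DNN. The names `christoffel_sampling_probs` and `draw_samples` are hypothetical.

```python
import numpy as np

def christoffel_sampling_probs(B, tol=1e-10):
    """Sampling probabilities over candidate points.

    B is a (K, N) matrix whose column j holds the j-th dictionary
    function evaluated at the K candidate points (assumed setup).
    """
    # Orthonormal basis for the span of the dictionary on the grid.
    U, s, _ = np.linalg.svd(B, full_matrices=False)
    # Discard numerically dependent directions.
    rank = int(np.sum(s > tol * s[0]))
    U = U[:, :rank]
    # Row-wise squared norms: a discrete analogue of the reciprocal
    # Christoffel function of the subspace; they sum to `rank`.
    weights = np.sum(U**2, axis=1)
    return weights / weights.sum()

def draw_samples(candidates, B, m, rng):
    """Draw m candidate points at random according to the probabilities."""
    p = christoffel_sampling_probs(B)
    idx = rng.choice(len(candidates), size=m, replace=True, p=p)
    return candidates[idx], p[idx]

rng = np.random.default_rng(0)
# Candidate grid: Monte Carlo points in [-1, 1]^2 (illustrative choice).
candidates = rng.uniform(-1.0, 1.0, size=(2000, 2))
# Stand-in for the second-to-last layer: 8 random tanh nodes.
W = rng.standard_normal((2, 8))
b = rng.standard_normal(8)
B = np.tanh(candidates @ W + b)

points, probs = draw_samples(candidates, B, m=50, rng=rng)
print(points.shape)
```

In an adaptive loop one would presumably retrain the network on the enlarged sample set, re-evaluate the layer on the candidate grid, and repeat; that loop structure is an assumption here, not something stated in the abstract.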
