CAP: instance complexity-aware network pruning

Sep 08, 2022

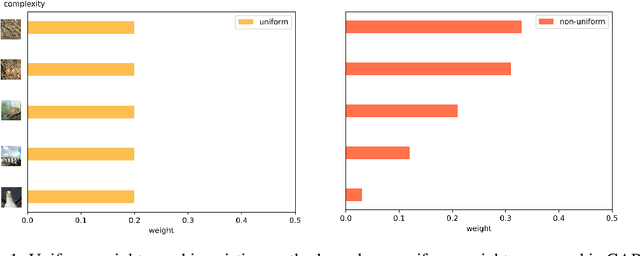

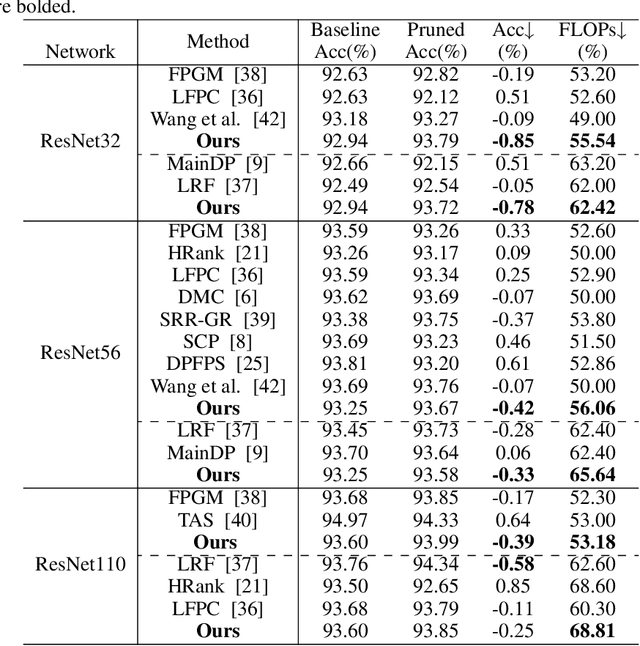

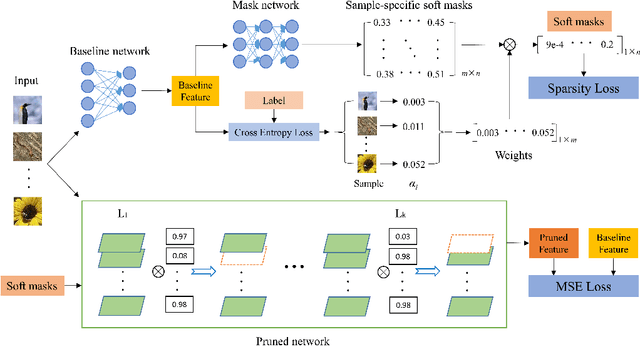

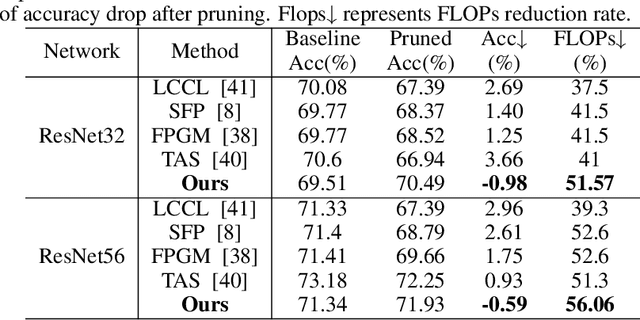

Existing differentiable channel pruning methods often attach scaling factors or masks behind channels to prune less important filters, and assume that all input samples contribute uniformly to filter importance. In particular, the effect of instance complexity on pruning performance has not yet been fully investigated. In this paper, we propose CAP, a simple yet effective differentiable network pruning method based on instance complexity-aware filter importance scores. We define an instance-complexity-related weight for each sample, giving higher weights to hard samples, and use the weighted sum of sample-specific soft masks to model the non-uniform contribution of different inputs. This encourages hard samples to dominate the pruning process and helps preserve model performance. In addition, we introduce a new regularizer that encourages polarization of the masks, so that a sweet spot can easily be found to identify the filters to be pruned. Performance evaluations on various network architectures and datasets demonstrate that CAP has advantages over state-of-the-art methods in pruning large networks. For instance, CAP improves the accuracy of ResNet56 on the CIFAR-10 dataset by 0.33% after removing 65.64% of FLOPs, and prunes 87.75% of the FLOPs of ResNet50 on the ImageNet dataset with only a 0.89% Top-1 accuracy loss.
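The abstract does not give the exact formulas, so the following is only a minimal NumPy sketch of the two ideas it describes: weighting sample-specific soft masks by instance complexity, and penalizing non-polarized masks. Everything concrete here is an assumption made for illustration, not the paper's definition: the function names, the use of a softmax over per-sample losses as the complexity weight, and the `s * (1 - s)` form of the polarization penalty.

```python
import numpy as np

def complexity_weights(per_sample_loss, temperature=1.0):
    # ASSUMPTION: per-sample loss is used as a proxy for instance complexity,
    # and a softmax turns it into weights that favor hard (high-loss) samples.
    z = np.asarray(per_sample_loss, dtype=float) / temperature
    z = z - z.max()              # subtract the max for numerical stability
    w = np.exp(z)
    return w / w.sum()

def filter_importance(soft_masks, weights):
    # Weighted sum of sample-specific soft masks.
    # soft_masks: (num_samples, num_filters), values in [0, 1].
    return weights @ soft_masks

def polarization_penalty(scores):
    # ASSUMPTION: a simple penalty that is zero only when every score is
    # exactly 0 or 1, which pushes masks toward the two extremes.
    scores = np.asarray(scores, dtype=float)
    return float(np.mean(scores * (1.0 - scores)))

# Toy data: two easy samples and one hard sample, two filters.
losses = np.array([0.1, 0.2, 2.0])
masks = np.array([[0.9, 0.1],
                  [0.8, 0.2],
                  [0.1, 0.9]])

w = complexity_weights(losses)
weighted_scores = filter_importance(masks, w)
uniform_scores = masks.mean(axis=0)   # baseline: every sample counts equally

keep_weighted = weighted_scores > 0.5
keep_uniform = uniform_scores > 0.5
```

In this toy setup the uniform average favors the first filter, while the complexity-weighted score favors the second, because the single hard sample relies on it. That is the sense in which hard samples dominate the pruning decision in this sketch. The 0.5 threshold is also an illustrative choice; a polarized mask distribution makes such a cut-off easier to pick.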
