Camera-Pose Robust Crater Detection from Chang'e 5

Paper and Code

Jun 07, 2024

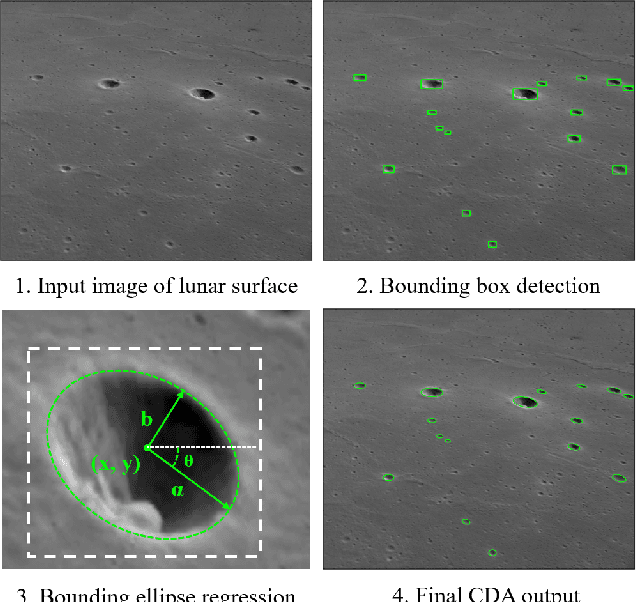

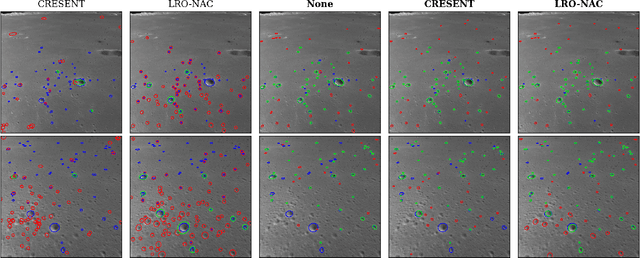

As space missions aim to explore increasingly hazardous terrain, accurate and timely position estimates are required to ensure safe navigation. Vision-based navigation achieves this goal by correlating impact craters visible in onboard imagery with a known database to estimate a craft's pose. However, the existing literature has not sufficiently evaluated crater-detection algorithm (CDA) performance on imagery containing off-nadir view angles. In this work, we evaluate the performance of Mask R-CNN for crater detection, comparing models pretrained on simulated data containing off-nadir view angles to models pretrained on real lunar images. We demonstrate that pretraining on real lunar images is superior despite the lack of images containing off-nadir view angles, achieving a detection performance of 63.1 F1-score and an ellipse-regression performance of 0.701 intersection over union. This work provides the first quantitative analysis of CDA performance on images containing off-nadir view angles. Towards the development of increasingly robust CDAs, we additionally provide the first annotated CDA dataset with off-nadir view angles from the Chang'e 5 Landing Camera.
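The two metrics quoted above can be illustrated with a minimal sketch. It is not the authors' evaluation code: the function names, the `(cx, cy, a, b, theta)` ellipse parameterisation, and the pixel-grid approximation of ellipse overlap are all assumptions made for illustration. The F1 function returns a value in [0, 1], whereas the 63.1 figure in the abstract appears to be on a 0-100 scale.

```python
import math


def f1_score(tp, fp, fn):
    """F1 = 2PR / (P + R), written equivalently as 2TP / (2TP + FP + FN)."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def in_ellipse(x, y, cx, cy, a, b, theta):
    """True if (x, y) lies inside the ellipse with centre (cx, cy),
    semi-axes a and b, rotated by theta radians (hypothetical parameterisation)."""
    dx, dy = x - cx, y - cy
    c, s = math.cos(theta), math.sin(theta)
    # Rotate the offset into the ellipse's own axis-aligned frame.
    u = dx * c + dy * s
    v = -dx * s + dy * c
    return (u / a) ** 2 + (v / b) ** 2 <= 1.0


def ellipse_iou(e1, e2, width, height):
    """Approximate intersection over union of two ellipses by testing
    every pixel centre of a width x height grid."""
    inter = 0
    union = 0
    for py in range(height):
        for px in range(width):
            x, y = px + 0.5, py + 0.5
            in1 = in_ellipse(x, y, *e1)
            in2 = in_ellipse(x, y, *e2)
            inter += in1 and in2
            union += in1 or in2
    return inter / union if union else 0.0


if __name__ == "__main__":
    # Hypothetical counts: 8 matched craters, 2 false detections, 2 misses.
    print(f1_score(8, 2, 2))
    predicted = (50.0, 50.0, 20.0, 10.0, 0.3)
    annotated = (52.0, 49.0, 18.0, 11.0, 0.2)
    print(ellipse_iou(predicted, annotated, 100, 100))
```

Rasterising keeps the sketch short and dependency-free. An analytic or polygon-based overlap would be more precise for small craters, where pixel quantisation matters.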
