BURG-Toolkit: Robot Grasping Experiments in Simulation and the Real World

Paper and Code

May 27, 2022

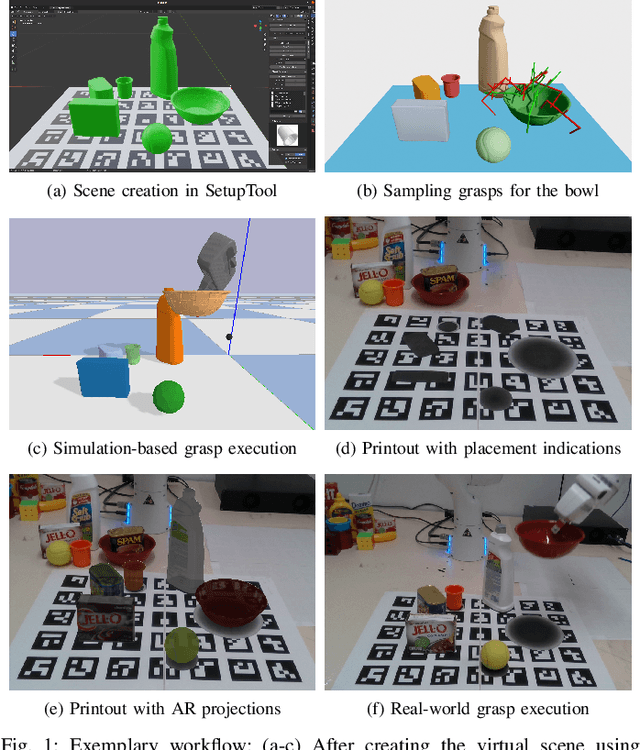

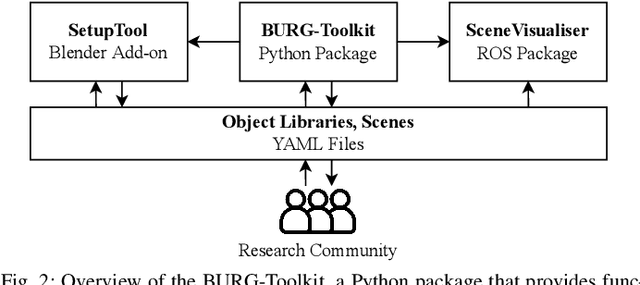

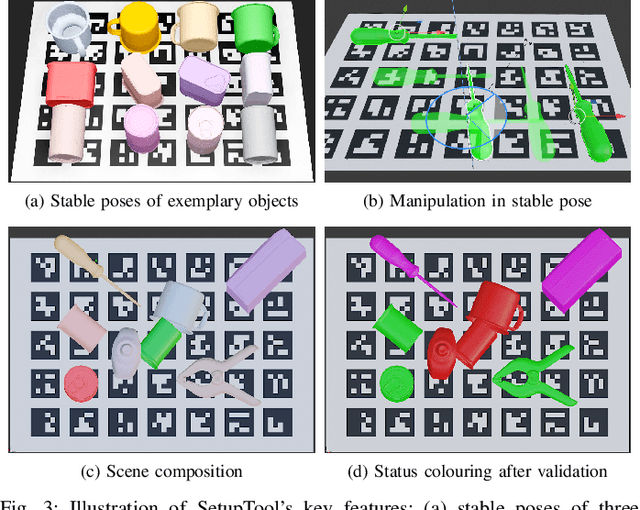

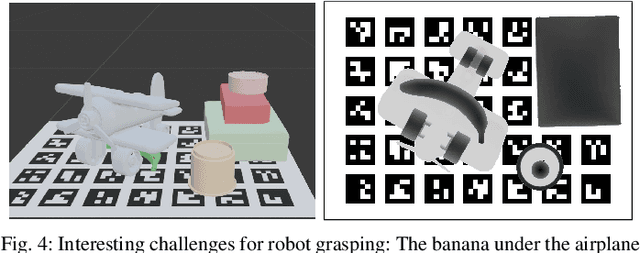

This paper presents BURG-Toolkit, a set of open-source tools for Benchmarking and Understanding Robotic Grasping. Our tools allow researchers to: (1) create virtual scenes for generating training data and performing grasping in simulation; (2) recreate those scenes by accurately arranging the corresponding objects in the physical world for real-robot experiments, which supports analysis of the sim-to-real gap; and (3) share the scenes with other researchers to foster comparability and reproducibility of experimental results. We explain how to use the tools by describing potential use cases, and we provide proof-of-concept experimental results that quantify the sim-to-real gap for robot grasping in example scenes. The tools are available at: https://mrudorfer.github.io/burg-toolkit/
