Building Decision Making Models Through Language Model Regime

Aug 12, 2024

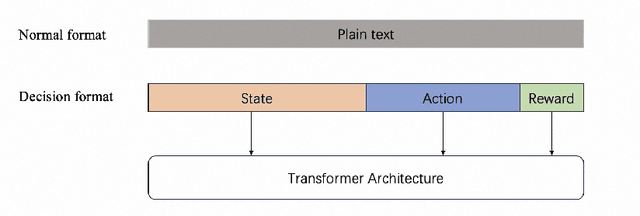

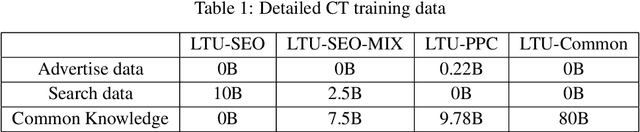

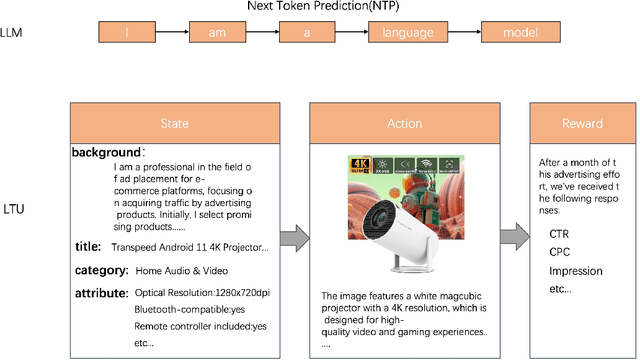

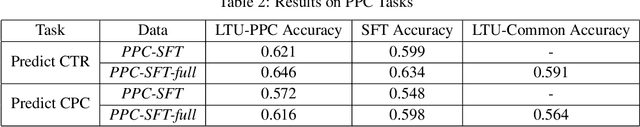

We propose a novel approach to decision-making problems that leverages the generalization capabilities of large language models (LLMs). Traditional methods such as expert systems, planning algorithms, and reinforcement learning often generalize poorly and typically require training a new model for each task. In contrast, LLMs generalize remarkably well across varied language tasks, which inspires a new strategy for training decision-making models. Our approach, referred to as "Learning then Using" (LTU), is a two-stage process. First, the *learning* phase builds a robust foundation decision-making model by integrating diverse knowledge from various domains and decision-making contexts. The subsequent *using* phase then refines this foundation model for specific decision-making scenarios. Unlike other studies that apply LLMs to decision making through supervised learning, LTU follows a versatile training methodology that combines broad pre-training with targeted fine-tuning. Experiments in e-commerce domains such as advertising and search optimization show that the LTU approach outperforms traditional supervised learning regimes in both decision-making capability and generalization. The LTU approach is the first practical training architecture that combines LLMs with both single-step and multi-step decision-making tasks, and it can be applied beyond game and robot domains. It provides a robust and adaptable framework for decision making and enhances the effectiveness and flexibility of systems facing a variety of challenges.
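The two-stage structure described in the abstract can be illustrated with a toy sketch. This is not the authors' implementation: a small logistic-regression model stands in for the LLM, and the synthetic domains, the function names (`learning_phase`, `using_phase`, `make_domain`, `sgd`, `accuracy`), and all hyperparameters are assumptions chosen only to show the order of operations, namely broad training on pooled multi-domain data followed by fine-tuning on one target task.

```python
import math
import random


def make_domain(rng, true_w, n):
    """Generate n synthetic examples: (features + bias term, binary decision)."""
    data = []
    for _ in range(n):
        x = [rng.uniform(-1.0, 1.0) for _ in range(len(true_w) - 1)] + [1.0]
        score = sum(w * xi for w, xi in zip(true_w, x))
        data.append((x, 1 if score > 0 else 0))
    return data


def sgd(weights, data, epochs, lr):
    """Run logistic-regression SGD and return an updated copy of the weights."""
    w = list(weights)
    for _ in range(epochs):
        for x, y in data:
            z = sum(wi * xi for wi, xi in zip(w, x))
            p = 1.0 / (1.0 + math.exp(-z))
            for i, xi in enumerate(x):
                w[i] += lr * (y - p) * xi
    return w


def learning_phase(domains, dim):
    """Stage 1 (toy stand-in): train from scratch on data pooled across domains."""
    pooled = [example for domain in domains for example in domain]
    return sgd([0.0] * dim, pooled, epochs=20, lr=0.1)


def using_phase(foundation, task_data):
    """Stage 2 (toy stand-in): fine-tune the foundation weights on one task."""
    return sgd(foundation, task_data, epochs=5, lr=0.05)


def accuracy(w, data):
    """Fraction of examples whose predicted decision matches the label."""
    hits = sum(
        1 for x, y in data
        if (sum(wi * xi for wi, xi in zip(w, x)) > 0) == (y == 1)
    )
    return hits / len(data)


# Hypothetical data: two related source domains and one small target task.
rng = random.Random(0)
source_domains = [
    make_domain(rng, [1.0, 0.5, 0.0], 200),
    make_domain(rng, [0.8, 0.7, 0.1], 200),
]
target_task = make_domain(rng, [0.9, 0.6, -0.2], 30)

foundation = learning_phase(source_domains, dim=3)
tuned = using_phase(foundation, target_task)
print("foundation accuracy on target:", accuracy(foundation, target_task))
print("fine-tuned accuracy on target:", accuracy(tuned, target_task))
```

In the paper's setting the foundation model would be an LLM and the pooled data would come from real decision-making contexts; the sketch only mirrors the learn-then-use ordering and the reuse of the stage-one weights as the starting point for stage two.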
