Bridging Theory and Algorithm for Domain Adaptation

Apr 11, 2019

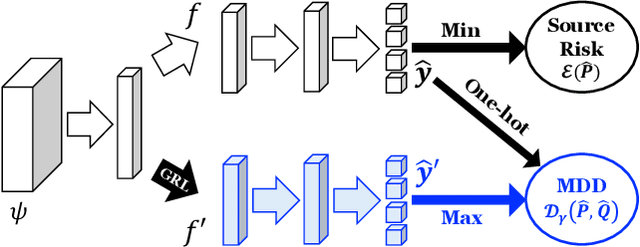

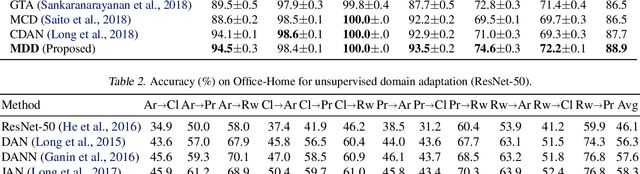

This paper addresses the problem of unsupervised domain adaptation from theoretical and algorithmic perspectives. Existing domain adaptation theories naturally imply minimax optimization algorithms, which connect well with adversarial-learning-based domain adaptation methods. However, several disconnections still leave a gap between theory and algorithm. We extend previous theories (Ben-David et al., 2010; Mansour et al., 2009c) to multiclass classification in domain adaptation, where classifiers based on scoring functions and margin loss are standard algorithmic choices. We introduce a novel measurement, margin disparity discrepancy, that is tailored both to distribution comparison with asymmetric margin loss and to minimax optimization for easier training. Using this discrepancy, we derive new generalization bounds in terms of Rademacher complexity. Our theory can be seamlessly transformed into an adversarial learning algorithm for domain adaptation, successfully bridging the gap between theory and algorithm. A series of empirical studies shows that our algorithm achieves state-of-the-art accuracy on challenging domain adaptation tasks.
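To make the idea of a margin-based disparity between two scoring functions more concrete, here is a minimal sketch in plain Python. The abstract does not give the definitions, so everything below is an assumption made for illustration: the margin of a scorer at a label is taken as half the gap between that label's score and the best competing score, the margin loss is a ramp that is 1 at or below zero and 0 at or above the threshold `rho`, and the labels fed to the auxiliary scorer `f'` are the predictions of `f`. All function names are hypothetical. The sketch only evaluates the target-minus-source disparity gap for one fixed `f'`; the discrepancy itself would take a supremum over `f'`, which in practice is approximated by adversarial training and is not shown here.

```python
from typing import List, Sequence


def margin_loss(x: float, rho: float) -> float:
    """Ramp margin loss (assumed form): 1 if x <= 0, 0 if x >= rho, linear between."""
    if x <= 0:
        return 1.0
    if x >= rho:
        return 0.0
    return 1.0 - x / rho


def predicted_label(scores: Sequence[float]) -> int:
    """Index of the highest score, i.e. the label induced by a scoring function."""
    return max(range(len(scores)), key=lambda k: scores[k])


def margin(scores: Sequence[float], label: int) -> float:
    """Assumed margin: half the gap between the score at `label` and the best other score."""
    best_other = max(s for k, s in enumerate(scores) if k != label)
    return 0.5 * (scores[label] - best_other)


def margin_disparity(f_scores: List[Sequence[float]],
                     f_prime_scores: List[Sequence[float]],
                     rho: float) -> float:
    """Average margin loss of f' measured against the labels predicted by f."""
    total = 0.0
    for s, s_prime in zip(f_scores, f_prime_scores):
        total += margin_loss(margin(s_prime, predicted_label(s)), rho)
    return total / len(f_scores)


def disparity_gap(src_f, src_f_prime, tgt_f, tgt_f_prime, rho: float) -> float:
    """Target disparity minus source disparity for one fixed auxiliary scorer f'."""
    return (margin_disparity(tgt_f, tgt_f_prime, rho)
            - margin_disparity(src_f, src_f_prime, rho))


# Toy two-class scores: f' agrees with f on the source sample, disagrees on the target.
src_f, src_fp = [[2.0, 0.0]], [[3.0, 0.0]]
tgt_f, tgt_fp = [[2.0, 0.0]], [[0.0, 1.0]]
gap = disparity_gap(src_f, src_fp, tgt_f, tgt_fp, rho=1.0)
```

The asymmetry mentioned in the abstract shows up in `margin_disparity`: `f` contributes only its hard prediction, while `f'` is scored through the margin loss, so swapping the two arguments generally changes the value.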
