Breaking Transferability of Adversarial Samples with Randomness

Jun 17, 2018

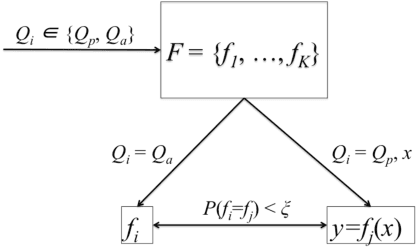

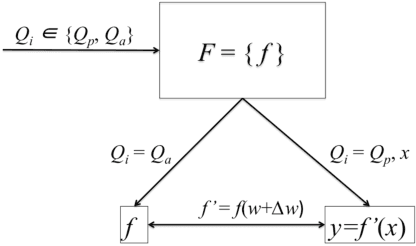

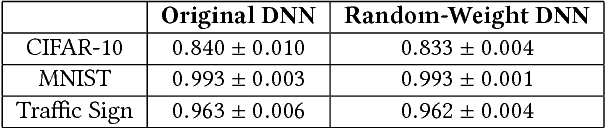

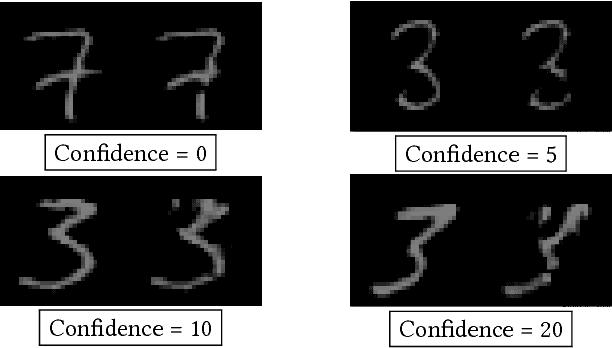

We investigate the role that the transferability of adversarial attacks plays in the observed vulnerabilities of Deep Neural Networks (DNNs). We demonstrate that introducing randomness into DNN models is sufficient to defeat adversarial attacks, provided the adversary does not have an unlimited attack budget. Instead of making one specific DNN model robust to perfect-knowledge attacks (a.k.a. white-box attacks), creating randomness within an army of DNNs completely eliminates the possibility of perfect knowledge acquisition, resulting in a DNN ensemble that is significantly more robust against the strongest form of attacks. We also show that when the adversary has an unlimited data-perturbation budget, all defensive techniques eventually break down as the budget increases. It is therefore important to understand the saddle point of the game, beyond which the adversary would no longer pursue the attack. Furthermore, we explore the relationship between attack severity and decision boundary robustness in the version space. We empirically demonstrate that simply adding small Gaussian random noise to the learned weights can increase a DNN model's resilience to adversarial attacks by as much as 74.2%. More importantly, we show that randomly activating/revealing a model from a pool of pre-trained DNNs at each query request puts a tremendous strain on the adversary's attack strategies. We compare our randomization techniques to the Ensemble Adversarial Training technique and show that our randomization techniques are superior under different attack budget constraints.
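
The two randomization ideas named in the abstract (Gaussian noise on learned weights, and answering each query with a randomly chosen model from a pool) can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the tiny two-layer network, the names `perturb_weights`, `forward`, and `RandomizedPool`, the noise scale `sigma=0.01`, and the pool size of 5 are all assumptions chosen for illustration, and the randomly initialized weights merely stand in for separately pre-trained DNNs.

```python
import numpy as np


def perturb_weights(weights, sigma, rng):
    """Return copies of the weight arrays with zero-mean Gaussian noise added.

    `sigma` is a hypothetical noise scale; the abstract only says "small".
    """
    return [w + rng.normal(0.0, sigma, size=w.shape) for w in weights]


def forward(weights, x):
    """Toy two-layer ReLU network, used only as a stand-in for a DNN."""
    w1, w2 = weights
    hidden = np.maximum(x @ w1, 0.0)
    return hidden @ w2


class RandomizedPool:
    """Answers each query with one model drawn uniformly at random from a pool."""

    def __init__(self, models, seed=None):
        self.models = list(models)
        self.rng = np.random.default_rng(seed)

    def predict(self, x):
        # A fresh draw per query: the caller cannot tell which model answered.
        idx = int(self.rng.integers(len(self.models)))
        return np.argmax(forward(self.models[idx], x), axis=-1)


rng = np.random.default_rng(0)

# Idea 1: add small Gaussian noise to one model's (here: random) weights.
base = [rng.normal(size=(4, 8)), rng.normal(size=(8, 3))]
noisy = perturb_weights(base, sigma=0.01, rng=rng)

# Idea 2: a pool of independent models (stand-ins for pre-trained DNNs).
pool_models = [
    [rng.normal(size=(4, 8)), rng.normal(size=(8, 3))] for _ in range(5)
]
pool = RandomizedPool(pool_models, seed=1)

x = rng.normal(size=(2, 4))   # two 4-dimensional inputs
labels = pool.predict(x)      # one predicted class index per input
```

Because the model is re-drawn on every call to `predict`, an adversary who crafts a perturbation against one pool member has no guarantee that the same member will answer the next query, so the attack must transfer across the pool to succeed.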