Breaking Feedback Loops in Recommender Systems with Causal Inference

Paper and Code

Jul 15, 2022

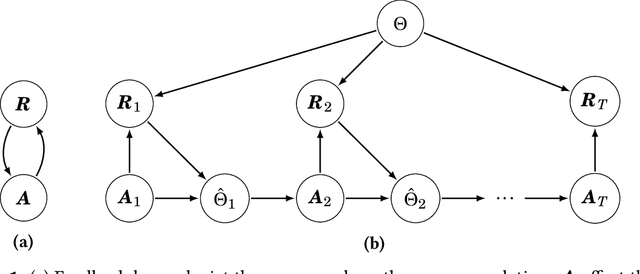

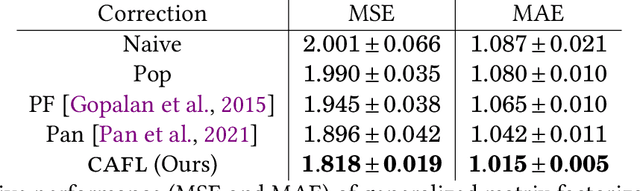

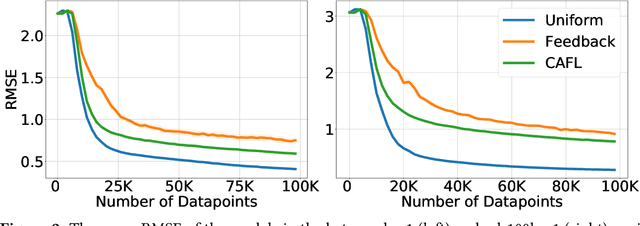

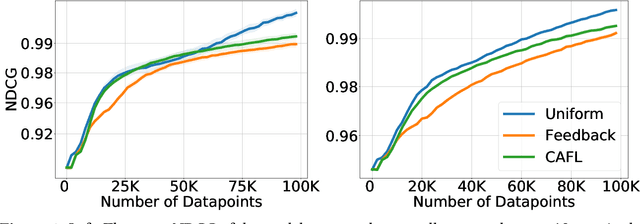

Recommender systems play a key role in shaping modern web ecosystems. These systems alternate between (1) making recommendations (2) collecting user responses to these recommendations, and (3) retraining the recommendation algorithm based on this feedback. During this process the recommender system influences the user behavioral data that is subsequently used to update it, thus creating a feedback loop. Recent work has shown that feedback loops may compromise recommendation quality and homogenize user behavior, raising ethical and performance concerns when deploying recommender systems. To address these issues, we propose the Causal Adjustment for Feedback Loops (CAFL), an algorithm that provably breaks feedback loops using causal inference and can be applied to any recommendation algorithm that optimizes a training loss. Our main observation is that a recommender system does not suffer from feedback loops if it reasons about causal quantities, namely the intervention distributions of recommendations on user ratings. Moreover, we can calculate this intervention distribution from observational data by adjusting for the recommender system's predictions of user preferences. Using simulated environments, we demonstrate that CAFL improves recommendation quality when compared to prior correction methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge