Bayesian Zero-Shot Learning

Jul 25, 2019

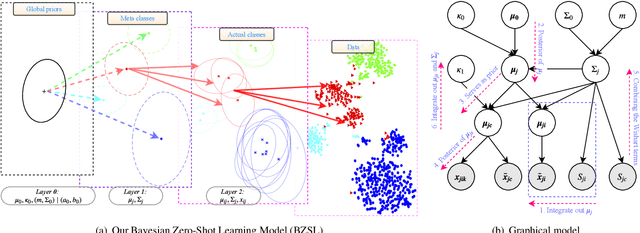

Object classes that surround us have a natural tendency to emerge at varying levels of abstraction. We propose a Bayesian approach to zero-shot learning (ZSL) that introduces the notion of meta-classes and implements a Bayesian hierarchy around these classes to effectively blend data likelihood with local and global priors. Local priors driven by data from seen classes, i.e., classes that are available at training time, become instrumental in recovering unseen classes, i.e., classes that are missing at training time, in a generalized ZSL setting. Hyperparameters of the Bayesian model offer a convenient way to optimize the trade-off between seen and unseen class accuracy, in addition to guiding other aspects of model fitting. We conduct experiments on seven benchmark datasets, including the large-scale ImageNet, and show that our model improves on the current state of the art in the challenging generalized ZSL setting.
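
To make the idea of blending data likelihood with local and global priors concrete, the following is a minimal sketch and not the model from the paper. It assumes a simple Gaussian setting with a shared, known variance, in which each source of information contributes to a class mean in proportion to a weight. The function name `blended_mean` and the pseudo-count hyperparameters `kappa_global` and `kappa_local` are hypothetical names introduced only for this illustration.

```python
from typing import List


def blended_mean(
    global_mean: List[float],
    local_mean: List[float],
    sample_mean: List[float],
    n: int,
    kappa_global: float = 1.0,
    kappa_local: float = 1.0,
) -> List[float]:
    """Weighted estimate of a class mean (illustrative assumption only).

    global_mean  : prior mean shared by all classes
    local_mean   : mean of the seen classes in the same meta-class
    sample_mean  : mean of the class's own training features (ignored if n == 0)
    n            : number of training samples for the class (0 for an unseen class)
    kappa_*      : hypothetical pseudo-counts controlling the strength of each prior
    """
    total = kappa_global + kappa_local + n
    return [
        (kappa_global * g + kappa_local * l + n * x) / total
        for g, l, x in zip(global_mean, local_mean, sample_mean)
    ]


# Unseen class: no samples, so the estimate comes from the two priors alone.
unseen = blended_mean([0.0, 0.0], [2.0, 4.0], [0.0, 0.0], n=0)

# Seen class: eight samples pull the estimate toward the observed mean.
seen = blended_mean([0.0, 0.0], [2.0, 4.0], [4.0, 4.0], n=8)
```

In this toy setting, raising `kappa_local` pulls the estimate for an unseen class closer to its seen neighbours, which loosely mirrors how the abstract describes hyperparameters as controlling the trade-off between seen and unseen class accuracy.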
