Bayesian Learning for Disparity Map Refinement for Semi-Dense Active Stereo Vision

Paper and Code

Sep 12, 2022

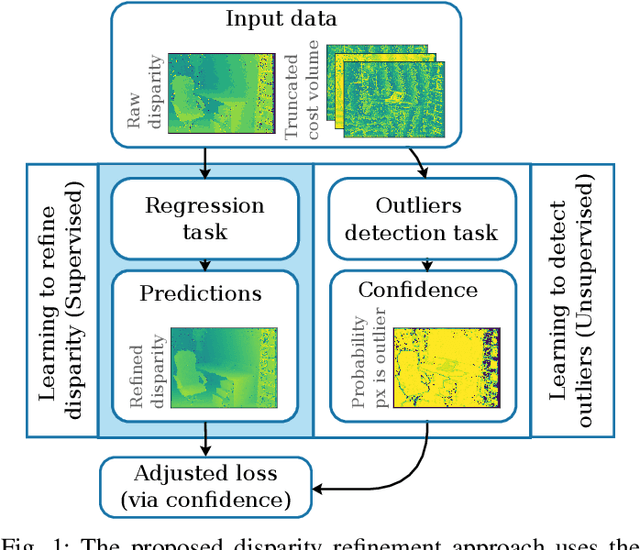

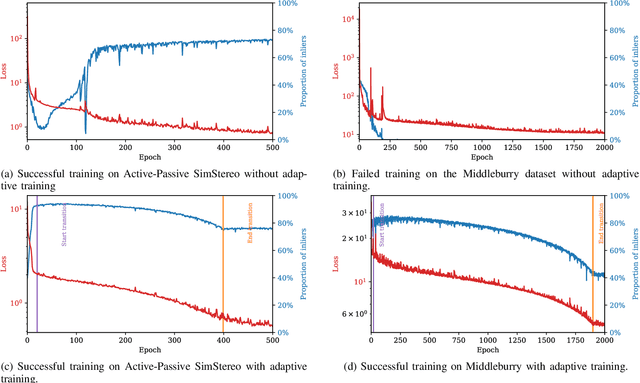

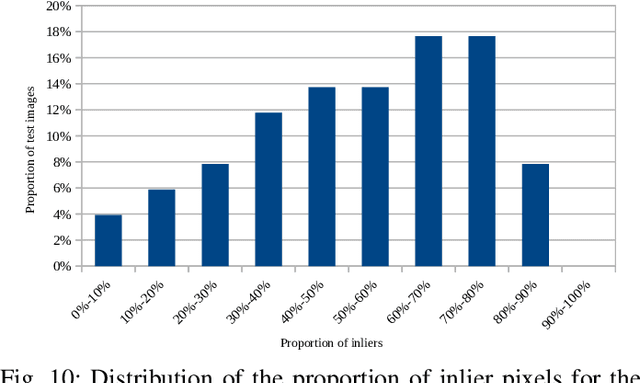

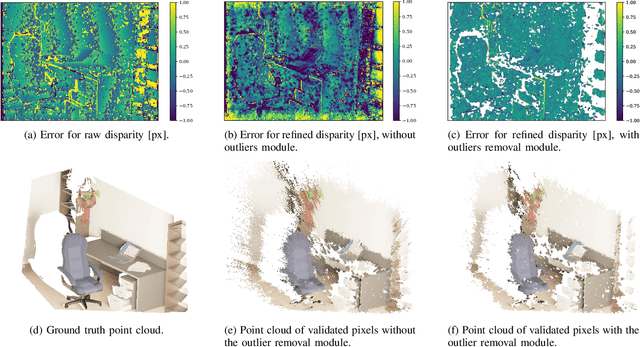

Recent developments in stereo vision have focused largely on obtaining accurate dense disparity maps in passive stereo vision. Active vision systems enable more accurate dense disparity estimation than passive stereo. However, subpixel-accurate disparity estimation remains an open problem that has received little attention. In this paper, we propose a new learning strategy to train neural networks to estimate high-quality subpixel disparity maps for semi-dense active stereo vision. The key insight is that neural networks can double their accuracy if they jointly learn to refine the disparity map and to invalidate the pixels where there is insufficient information to correct the disparity estimate. Our approach is based on Bayesian modeling, in which validated and invalidated pixels are defined by their stochastic properties, allowing the model to learn by itself which pixels are worth its attention. Using active stereo datasets such as Active-Passive SimStereo, we demonstrate that the proposed method outperforms the current state-of-the-art active stereo models. We also demonstrate that the proposed approach compares favorably with state-of-the-art passive stereo models on the Middlebury dataset.
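To make the idea of jointly refining and invalidating pixels more concrete, the sketch below shows one hypothetical way such an objective could be written. It is not taken from the paper: the two-component mixture (a Laplace residual for validated pixels, a uniform residual for invalidated ones), the function names `mixture_nll` and `semi_dense`, and the parameters `valid_logit`, `log_scale`, and `disp_range` are all assumptions chosen for illustration.

```python
import numpy as np

def mixture_nll(pred, target, valid_logit, log_scale, disp_range=64.0):
    """Per-pixel negative log-likelihood of an illustrative two-component mixture.

    Assumed model (not the paper's): a validated pixel has a Laplace-distributed
    residual with scale exp(log_scale); an invalidated pixel has a residual that
    is uniform over a disparity range of width disp_range.
    """
    err = np.abs(pred - target)
    scale = np.exp(log_scale)
    # Log-probabilities of the "valid" and "invalid" labels, computed stably.
    log_p_valid = -np.logaddexp(0.0, -valid_logit)   # log(sigmoid(x))
    log_p_invalid = -np.logaddexp(0.0, valid_logit)  # log(1 - sigmoid(x))
    # Log-densities of the residual under each component.
    log_laplace = -np.log(2.0 * scale) - err / scale
    log_uniform = -np.log(disp_range)
    # Negative log of the mixture density.
    return -np.logaddexp(log_p_valid + log_laplace, log_p_invalid + log_uniform)

def semi_dense(pred, valid_logit, threshold=0.5):
    """Keep refined disparities only where the validity probability is high."""
    p_valid = 1.0 / (1.0 + np.exp(-valid_logit))
    return np.where(p_valid >= threshold, pred, np.nan)
```

Under these assumptions, a pixel with a large residual is cheaper to explain as invalid, while a pixel with a small residual is cheaper to explain as valid, so a network trained on such a loss is pushed to decide per pixel whether refinement is worthwhile. Thresholding the validity probability then yields a semi-dense map.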
