Balancing Performance and Energy Consumption of Bagging Ensembles for the Classification of Data Streams in Edge Computing

Paper and Code

Jan 17, 2022

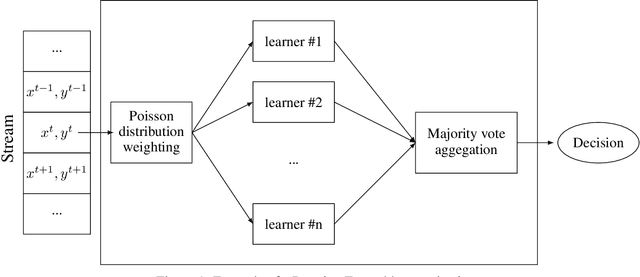

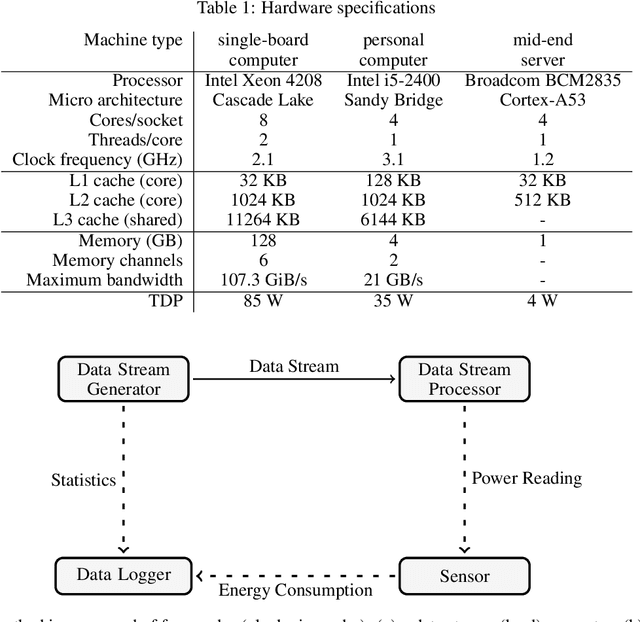

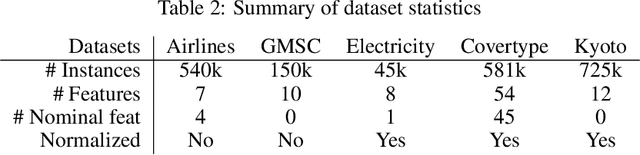

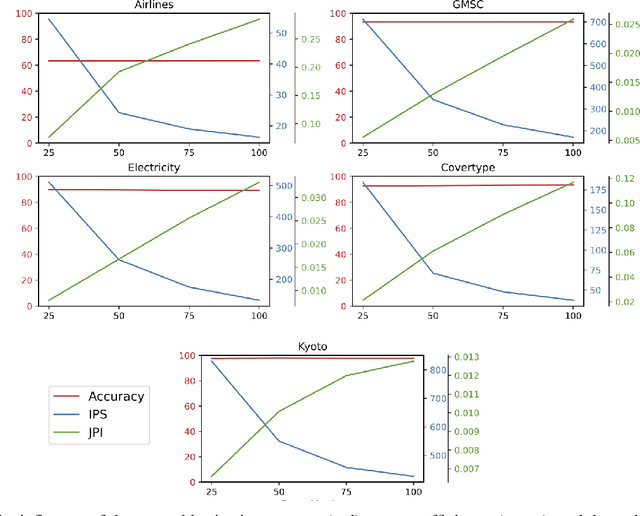

In recent years, the Edge Computing (EC) paradigm has emerged as an enabling factor for developing technologies like the Internet of Things (IoT) and 5G networks, bridging the gap between Cloud Computing services and end-users, supporting low latency, mobility, and location awareness to delay-sensitive applications. Most solutions in EC employ machine learning (ML) methods to perform data classification and other information processing tasks on continuous and evolving data streams. Usually, such solutions have to cope with vast amounts of data that come as data streams while balancing energy consumption, latency, and the predictive performance of the algorithms. Ensemble methods achieve remarkable predictive performance when applied to evolving data streams due to the combination of several models and the possibility of selective resets. This work investigates strategies for optimizing the performance (i.e., delay, throughput) and energy consumption of bagging ensembles to classify data streams. The experimental evaluation involved six state-of-art ensemble algorithms (OzaBag, OzaBag Adaptive Size Hoeffding Tree, Online Bagging ADWIN, Leveraging Bagging, Adaptive RandomForest, and Streaming Random Patches) applying five widely used machine learning benchmark datasets with varied characteristics on three computer platforms. Such strategies can significantly reduce energy consumption in 96% of the experimental scenarios evaluated. Despite the trade-offs, it is possible to balance them to avoid significant loss in predictive performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge