Backprop as Functor: A compositional perspective on supervised learning

Paper and Code

Dec 13, 2017

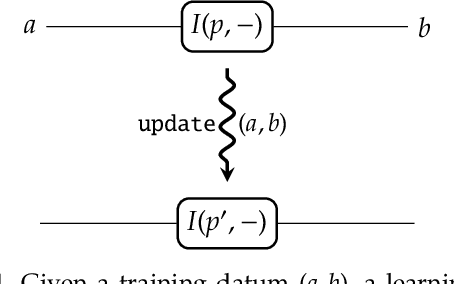

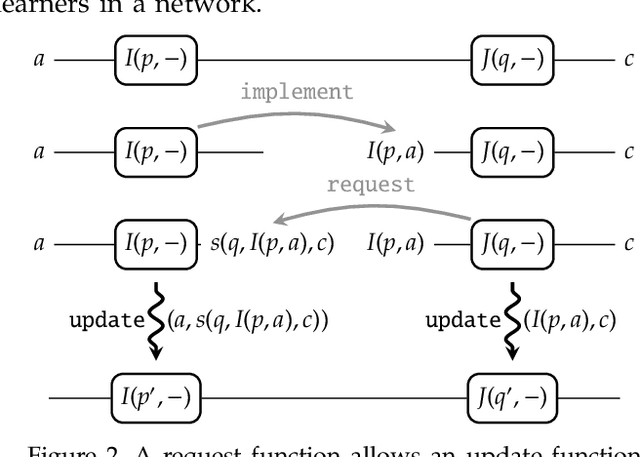

A supervised learning algorithm searches over a set of functions $A \to B$ parametrised by a space $P$ to find the best approximation to some ideal function $f\colon A \to B$. It does this by taking examples $(a,f(a)) \in A\times B$, and updating the parameter according to some rule. We define a category where these update rules may be composed, and show that gradient descent---with respect to a fixed step size and an error function satisfying a certain property---defines a monoidal functor from a category of parametrised functions to this category of update rules. This provides a structural perspective on backpropagation, as well as a broad generalisation of neural networks.

* 33 pages

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge