Baby's CoThought: Leveraging Large Language Models for Enhanced Reasoning in Compact Models

Paper and Code

Aug 03, 2023

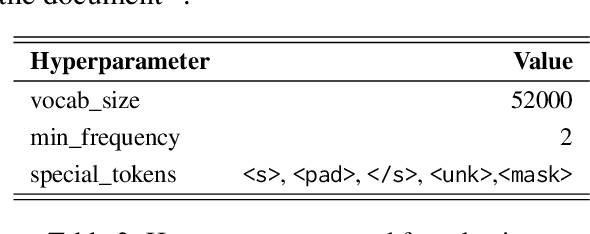

Large Language Models (LLMs) demonstrate remarkable performance on a variety of Natural Language Understanding (NLU) tasks, primarily due to their in-context learning ability. This ability is utilized in our proposed "CoThought" pipeline, which efficiently trains smaller "baby" language models (BabyLMs) by leveraging the Chain of Thought (CoT) prompting of LLMs. Our pipeline restructures a dataset of less than 100M in size using GPT-3.5-turbo, transforming it into task-oriented, human-readable texts that are comparable to the school texts for language learners. The BabyLM is then pretrained on this restructured dataset in a RoBERTa (Liu et al., 2019) fashion. In evaluations across 4 benchmarks, our BabyLM outperforms the RoBERTa-base in 10 linguistic, NLU, and question answering tasks by more than 3 points, showing superior ability to extract contextual information. These results suggest that compact LMs pretrained on small, LLM-restructured data can better understand tasks and achieve improved performance. The code for data processing and model training is available at: https://github.com/oooranz/Baby-CoThought.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge