BA-Net: Bridge Attention for Deep Convolutional Neural Networks

Paper and Code

Dec 08, 2021

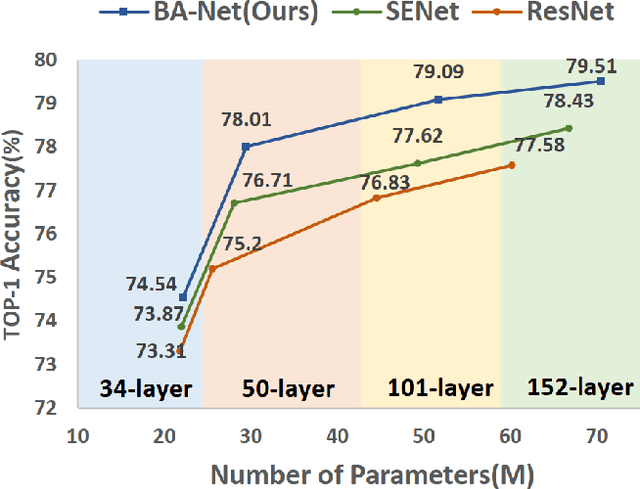

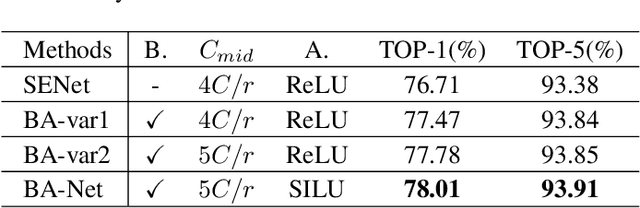

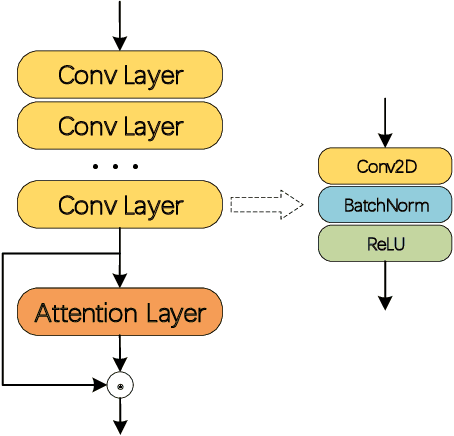

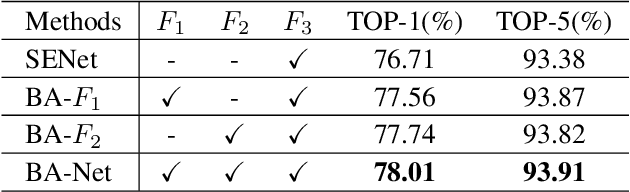

In recent years, channel attention mechanisms have been widely investigated for their great potential to improve the performance of deep convolutional neural networks (CNNs). However, in most existing methods, only the output of the adjacent convolution layer is fed to the attention layer for calculating the channel weights, and information from the other convolution layers is ignored. Based on these observations, a simple strategy, named Bridge Attention Net (BA-Net), is proposed for better channel attention. The main idea of this design is to bridge the outputs of the previous convolution layers through skip connections for channel weight generation. BA-Net not only provides richer features for calculating the channel weights in the forward pass, but also multiplies the paths for parameter updates in the backward pass. Comprehensive evaluation demonstrates that the proposed approach achieves state-of-the-art performance compared with existing methods in terms of accuracy and speed. Bridge Attention provides a fresh perspective on the design of neural network architectures and shows great potential for improving the performance of existing channel attention mechanisms. The code is available at https://github.com/zhaoy376/Attention-mechanism
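To make the bridging idea concrete, the following is a minimal NumPy sketch, not the authors' implementation. It assumes each earlier convolution output is squeezed by global average pooling, projected to a shared width by a hypothetical per-layer matrix, summed, and then mapped to sigmoid channel weights for the last layer's output. The function names, the summation, the ReLU, and all shapes are illustrative assumptions; the abstract only states that outputs of previous layers are bridged through skip connections.

```python
import numpy as np

def global_avg_pool(x):
    # Squeeze a (C, H, W) feature map to a (C,) channel descriptor.
    return x.mean(axis=(1, 2))

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def bridge_attention(features, proj_weights, out_weight):
    """Hypothetical bridge-attention step (assumed design, see lead-in).

    features:     list of (C_i, H_i, W_i) arrays from successive conv layers;
                  the last one is the output that gets rescaled.
    proj_weights: list of (D, C_i) matrices mapping each descriptor to width D.
    out_weight:   (C_last, D) matrix producing one logit per output channel.
    """
    # Squeeze every bridged layer, not only the adjacent one.
    pooled = [global_avg_pool(f) for f in features]
    # Assumption: descriptors are fused by summing their linear projections.
    bridged = sum(w @ p for w, p in zip(proj_weights, pooled))
    hidden = np.maximum(bridged, 0.0)  # ReLU
    weights = sigmoid(out_weight @ hidden)  # one weight in (0, 1) per channel
    # Rescale the last feature map channel by channel.
    return features[-1] * weights[:, None, None], weights

# Toy usage with random features and randomly initialised matrices.
rng = np.random.default_rng(0)
feats = [
    rng.standard_normal((4, 8, 8)),
    rng.standard_normal((4, 8, 8)),
    rng.standard_normal((16, 8, 8)),
]
proj = [0.1 * rng.standard_normal((8, f.shape[0])) for f in feats]
out_w = 0.1 * rng.standard_normal((16, 8))
out, w = bridge_attention(feats, proj, out_w)
```

Because every bridged layer contributes to the channel weights, the gradient of the weights reaches each of those layers directly, which is one way to read the abstract's remark about additional paths for parameter updates.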
