Automatically Generating Counterfactuals for Relation Classification


Mar 10, 2022

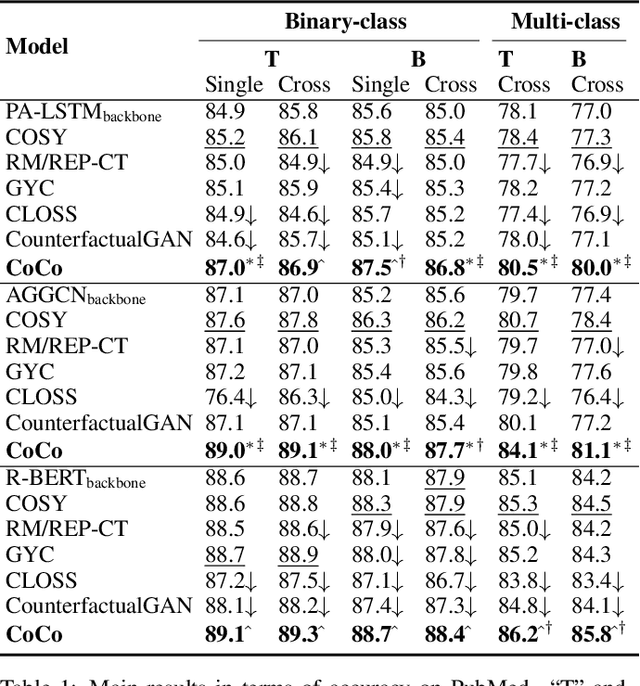

The goal of relation classification (RC) is to extract the semantic relations between entities in text. Because RC is a fundamental task in natural language processing, ensuring the robustness of RC models is crucial. Although current deep neural models achieve high accuracy on RC tasks, they are easily affected by spurious correlations. One solution to this problem is to train the model on counterfactually augmented data (CAD) so that it learns causal features rather than confounded ones. However, no attempt has yet been made to generate counterfactuals for RC tasks. In this paper, we formulate the problem of automatically generating CAD for RC tasks from an entity-centric viewpoint and develop a novel approach to derive contextual counterfactuals for entities. Specifically, we exploit two elementary topological properties of syntactic and semantic dependency graphs, namely centrality and the shortest path, to first identify and then intervene on the contextual causal features for entities. We conduct a comprehensive evaluation on four RC datasets by combining the proposed approach with a variety of backbone RC models. The results demonstrate that our approach not only improves the performance of the backbones but also makes them more robust in out-of-domain tests.
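
To make the role of the two graph properties concrete, here is a minimal sketch of how the shortest path and centrality might be used to pick candidate contextual features between two entities. It is not the authors' implementation: the dependency edges are hand-written for a toy sentence, degree centrality stands in for whatever centrality measure the paper uses, and all function names (`build_graph`, `shortest_path`, `degree_centrality`, `candidate_features`) are hypothetical.

```python
from collections import defaultdict, deque

# Hand-written, hypothetical dependency edges (head, dependent) for the toy
# sentence "The company founded by Smith released a phone."
# Assumption: every token in the sentence is unique, so tokens serve as node ids.
EDGES = [
    ("released", "company"),
    ("company", "The"),
    ("company", "founded"),
    ("founded", "Smith"),
    ("Smith", "by"),
    ("released", "phone"),
    ("phone", "a"),
]


def build_graph(edges):
    """Build an undirected adjacency map from (head, dependent) pairs."""
    graph = defaultdict(set)
    for head, dep in edges:
        graph[head].add(dep)
        graph[dep].add(head)
    return graph


def shortest_path(graph, source, target):
    """Breadth-first search; returns the node list from source to target."""
    parents = {source: None}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        if node == target:
            path = []
            while node is not None:
                path.append(node)
                node = parents[node]
            return path[::-1]
        for neighbor in sorted(graph[node]):
            if neighbor not in parents:
                parents[neighbor] = node
                queue.append(neighbor)
    return []  # no path between the two nodes


def degree_centrality(graph):
    """Fraction of the other nodes that each node is directly connected to."""
    n = len(graph)
    return {node: len(nbrs) / (n - 1) for node, nbrs in graph.items()}


def candidate_features(graph, head_entity, tail_entity):
    """Tokens strictly between the entities on the shortest path,
    ranked by degree centrality (most central first)."""
    inner = shortest_path(graph, head_entity, tail_entity)[1:-1]
    centrality = degree_centrality(graph)
    return sorted(inner, key=lambda tok: -centrality[tok])


graph = build_graph(EDGES)
print(candidate_features(graph, "company", "Smith"))  # ['founded']
```

In this toy case the only token on the path between the two entities is "founded", which is also the word that carries the relation. An intervention step, under the same assumptions, would replace such a token to produce a counterfactual sentence whose relation label changes.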
