Automatic Detection of Myocontrol Failures Based upon Situational Context Information


Jun 27, 2019

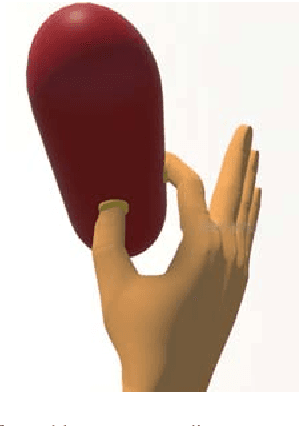

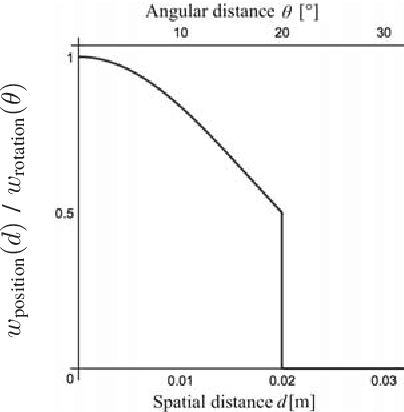

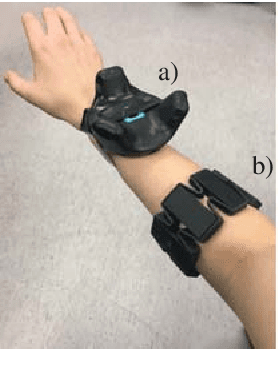

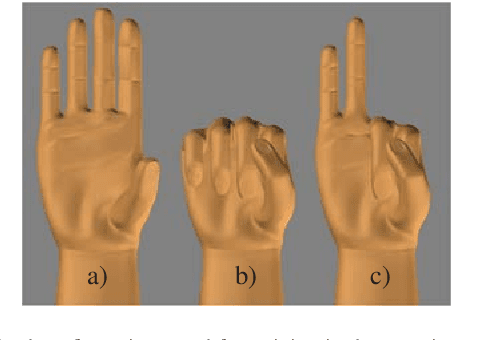

Myoelectric control systems for assistive devices are still unreliable. The user's input signals can become unstable over time because of factors such as fatigue, electrode displacement, or sweat. Hence, such controllers need to be updated constantly and rely heavily on user feedback. In this paper, we present an automatic failure detection method that learns when plausible predictions become unreliable and model updates are necessary. Our key insight is to enhance the control system with a set of generative models that learn sensible behaviour for a desired task from human demonstration. We illustrate our approach on a grasping scenario in virtual reality, in which the user is asked to grasp a bottle on a table. From demonstration, our model learns the reach-to-grasp motion from a resting position to two grasps (power grasp and tridigital grasp) and how to predict the most adequate grasp from local context, e.g. a tridigital grasp on the bottle cap or around the bottle neck. By measuring the error between new grasp attempts and the model prediction, the system can effectively detect which input commands do not reflect the user's intention. We evaluated our model in two cases: i) with both position and rotation information of the wrist pose, and ii) with only rotational information. Our results show that, in both cases, the error distributions of successful and failed grasp attempts differ with high statistical significance (p < 0.001).
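The error-based detection idea described in the abstract can be illustrated with a minimal sketch. It is not the authors' model: the abstract does not specify the generative models, so a per-dimension Gaussian fitted to demonstrations stands in for them here. The feature layout, the toy data, the function names, and the threshold factor `k` are all hypothetical assumptions chosen for illustration.

```python
import math
import statistics


def fit_reference(demos):
    """Fit a per-dimension mean and standard deviation to demonstrated poses.

    Assumption: a simple Gaussian stand-in for the paper's generative models.
    """
    dims = list(zip(*demos))
    means = [statistics.fmean(d) for d in dims]
    stds = [max(statistics.stdev(d), 1e-6) for d in dims]  # guard against zero spread
    return means, stds


def attempt_error(model, attempt):
    """Root-mean-square of per-dimension z-scores between attempt and model mean."""
    means, stds = model
    z2 = [((x - m) / s) ** 2 for x, m, s in zip(attempt, means, stds)]
    return math.sqrt(sum(z2) / len(z2))


def fit_threshold(model, demos, k=3.0):
    """Threshold = mean + k * std of the demonstrations' own errors.

    Assumption: k is a hypothetical tuning value, not taken from the paper.
    """
    errs = [attempt_error(model, d) for d in demos]
    return statistics.fmean(errs) + k * statistics.stdev(errs)


def is_failure(model, threshold, attempt):
    """Flag an attempt whose error exceeds the threshold learned from demonstrations."""
    return attempt_error(model, attempt) > threshold


# Hypothetical toy data: [x, y, z, yaw] wrist features at grasp time.
demos = [
    [0.30, 0.10, 0.20, 0.00],
    [0.31, 0.11, 0.19, 0.02],
    [0.29, 0.09, 0.21, -0.01],
    [0.30, 0.12, 0.20, 0.01],
    [0.32, 0.10, 0.22, -0.02],
]
model = fit_reference(demos)
threshold = fit_threshold(model, demos)

good_attempt = [0.30, 0.10, 0.20, 0.00]  # close to the demonstrations
bad_attempt = [0.45, 0.30, 0.05, 0.60]   # far from the demonstrations

print(is_failure(model, threshold, good_attempt))
print(is_failure(model, threshold, bad_attempt))
```

Under the same assumptions, a rotation-only variant (case ii) would pass only the rotational features to the same functions, for example `[d[3:] for d in demos]` in this toy layout.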
