autoAx: An Automatic Design Space Exploration and Circuit Building Methodology utilizing Libraries of Approximate Components

Paper and Code

Apr 01, 2019

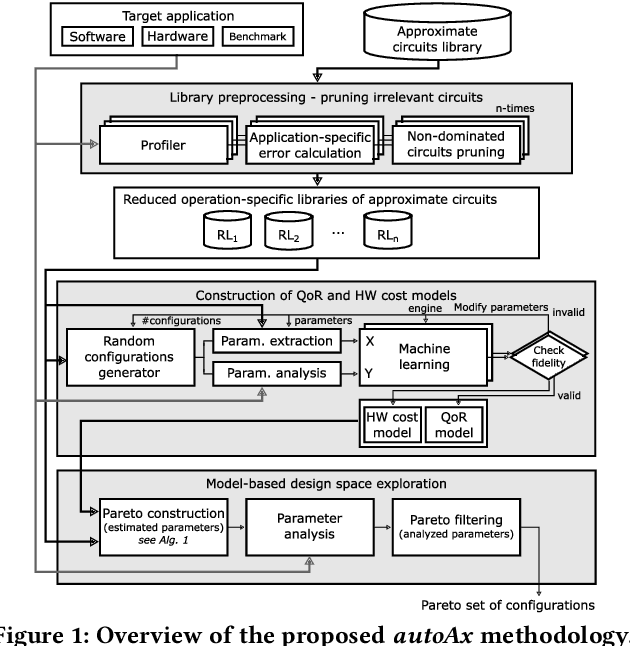

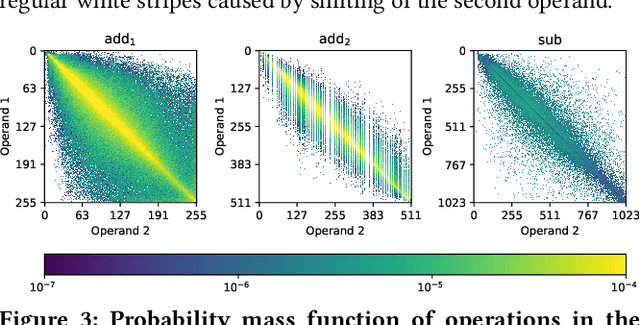

Approximate computing is an emerging paradigm for developing highly energy-efficient computing systems such as various accelerators. In the literature, many libraries of elementary approximate circuits have already been proposed to simplify the design process of approximate accelerators. Because these libraries contain from tens to thousands of approximate implementations for a single arithmetic operation it is intractable to find an optimal combination of approximate circuits in the library even for an application consisting of a few operations. An open problem is "how to effectively combine circuits from these libraries to construct complex approximate accelerators". This paper proposes a novel methodology for searching, selecting and combining the most suitable approximate circuits from a set of available libraries to generate an approximate accelerator for a given application. To enable fast design space generation and exploration, the methodology utilizes machine learning techniques to create computational models estimating the overall quality of processing and hardware cost without performing full synthesis at the accelerator level. Using the methodology, we construct hundreds of approximate accelerators (for a Sobel edge detector) showing different but relevant tradeoffs between the quality of processing and hardware cost and identify a corresponding Pareto-frontier. Furthermore, when searching for approximate implementations of a generic Gaussian filter consisting of 17 arithmetic operations, the proposed approach allows us to identify approximately $10^3$ highly important implementations from $10^{23}$ possible solutions in a few hours, while the exhaustive search would take four months on a high-end processor.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge